Change saturation has become one of the most searched concepts in change management practice – and one of the most inconsistently understood. In its simplest definition, change saturation occurs when the cumulative demand of concurrent change programmes on a specific employee group exceeds that group’s adaptive capacity. The employees in question do not simply slow down in their adoption of any individual change. They enter a qualitatively different state in which their willingness and ability to engage with any further change demand is fundamentally reduced. This state – characterised by fatigue, cynicism, and disengagement – is what distinguishes change saturation from ordinary change challenge, and it is why measuring it accurately matters for how organisations manage their change portfolios.

The problem is that most organisations measure change saturation using subjective methods – asking managers or employees whether they feel “overloaded,” collecting anecdotal feedback in town halls, or relying on pulse survey questions that do not produce data comparable across teams or time periods. These approaches are better than nothing, but they produce results that are difficult to act on because they cannot be disaggregated by programme, by employee group, or by change type. They tell an organisation that saturation is a problem. They do not tell it where, why, or what to do about it.

A more structured approach – a measurement recipe that produces actionable, comparable data – is what effective change saturation management requires.

Why personal opinion is an unreliable saturation measure

The instinct to measure change saturation through personal opinion – asking people whether they feel overwhelmed – has an obvious appeal. People experiencing saturation know it. Their self-report seems like direct access to the phenomenon being measured. The problem is that self-reported saturation is systematically biased in ways that make it unreliable for portfolio management decisions.

The first bias is social desirability. Employees who are experiencing genuine saturation may not report it accurately in formal measurement contexts if they believe reporting saturation will reflect negatively on their resilience or capability, or if they believe the organisation is not genuinely open to reducing the change load. In cultures where maintaining a positive front through adversity is valued, saturation is consistently underreported through self-report mechanisms.

The second bias is anchoring. Employees’ assessment of their saturation is relative to their recent experience. A team that has been operating at high saturation for an extended period may rate their current state as normal – because it is normal for them – even though it would be rated as high saturation by an objective measure. Conversely, a team that has recently experienced a significant increase in change load may rate themselves as highly saturated even if their objective load is within a manageable range, simply because the change from their recent baseline feels dramatic.

The third bias is aggregation. Even when individual self-reports are reasonably accurate, aggregating them across teams produces a misleading picture because the teams most likely to underreport saturation – those with the most competitive cultures, the most pressure to appear capable – are also those most likely to be genuinely saturated. The aggregate measure therefore understates saturation precisely where it is most severe.

The components of a structured saturation measurement approach

An effective change saturation measurement recipe builds the saturation assessment from objective components rather than deriving it from subjective opinion. The core components are: the volume of change programmes affecting a specific employee group, the intensity of those impacts (how much behavioural shift each change requires), the timing concentration of those impacts (how many significant changes are happening simultaneously versus sequenced), and a capacity baseline against which the aggregate load can be assessed.

Volume is the most commonly measured dimension – it is what heatmaps capture. But volume alone is insufficient, because a raw count treats every change as equivalent. A single high-intensity change requiring employees to completely redesign their workflows is a fundamentally different saturation driver from five low-intensity changes requiring minor process adjustments. A measurement approach that counts changes without weighting them by intensity will misclassify teams’ saturation risk: overestimating the saturation of teams with many minor changes and underestimating it for teams with fewer but more transformative ones.
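The difference between counting changes and weighting them can be shown with a minimal Python sketch. The 1-to-5 intensity scale, the team names, and every score below are illustrative assumptions rather than benchmark values.

```python
# Illustrative only: the 1-5 intensity scale and all scores are assumptions.
changes_by_team = {
    "Team A": [1, 1, 1, 1, 1],  # five minor process adjustments
    "Team B": [5, 4],           # two transformative changes
}


def raw_count(intensities):
    """Volume only: the number of changes, as a simple heatmap would show."""
    return len(intensities)


def weighted_load(intensities):
    """Volume weighted by intensity: each change contributes its score."""
    return sum(intensities)


for team, intensities in changes_by_team.items():
    print(team, "count:", raw_count(intensities),
          "weighted load:", weighted_load(intensities))
```

On the raw count, Team A looks like the higher risk. On the weighted load, Team B does, which is the misclassification the count-only approach produces.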

Prosci’s ADKAR model (Awareness, Desire, Knowledge, Ability, Reinforcement) provides a useful framework for thinking about impact intensity – the degree to which a change requires employees to develop new knowledge, new capability, and new habitual behaviours, as distinct from simply being aware that something has changed. Changes that require new knowledge and capability development impose a substantially higher saturation load than those that require awareness and comprehension only. Structuring impact assessment around these ADKAR dimensions allows intensity to be captured in a way that reflects the actual cognitive and behavioural demand on employees.
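One way to turn this into a score is to record which ADKAR elements a change demands of a group and sum a weight for each. The sketch below does that; the weights are hypothetical and chosen only to make deeper demands count for more. They are not published Prosci values.

```python
# Hypothetical weights (an assumption for illustration, not Prosci values):
# elements that demand new capability or habits weigh more than awareness.
ADKAR_WEIGHTS = {
    "awareness": 1,
    "desire": 1,
    "knowledge": 2,
    "ability": 3,
    "reinforcement": 3,
}


def intensity_score(required_elements):
    """Sum the weights of the distinct ADKAR elements a change requires."""
    elements = set(required_elements)
    unknown = elements - set(ADKAR_WEIGHTS)
    if unknown:
        raise ValueError(f"Unknown ADKAR elements: {sorted(unknown)}")
    return sum(ADKAR_WEIGHTS[element] for element in elements)


# A policy notice that only needs awareness versus a full workflow redesign.
policy_notice = intensity_score(["awareness"])
workflow_redesign = intensity_score(
    ["awareness", "desire", "knowledge", "ability", "reinforcement"]
)
print(policy_notice, workflow_redesign)
```

An organisation adopting this pattern would calibrate the weights against its own adoption data rather than use the placeholder values shown here.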

Establishing capacity baselines and thresholds

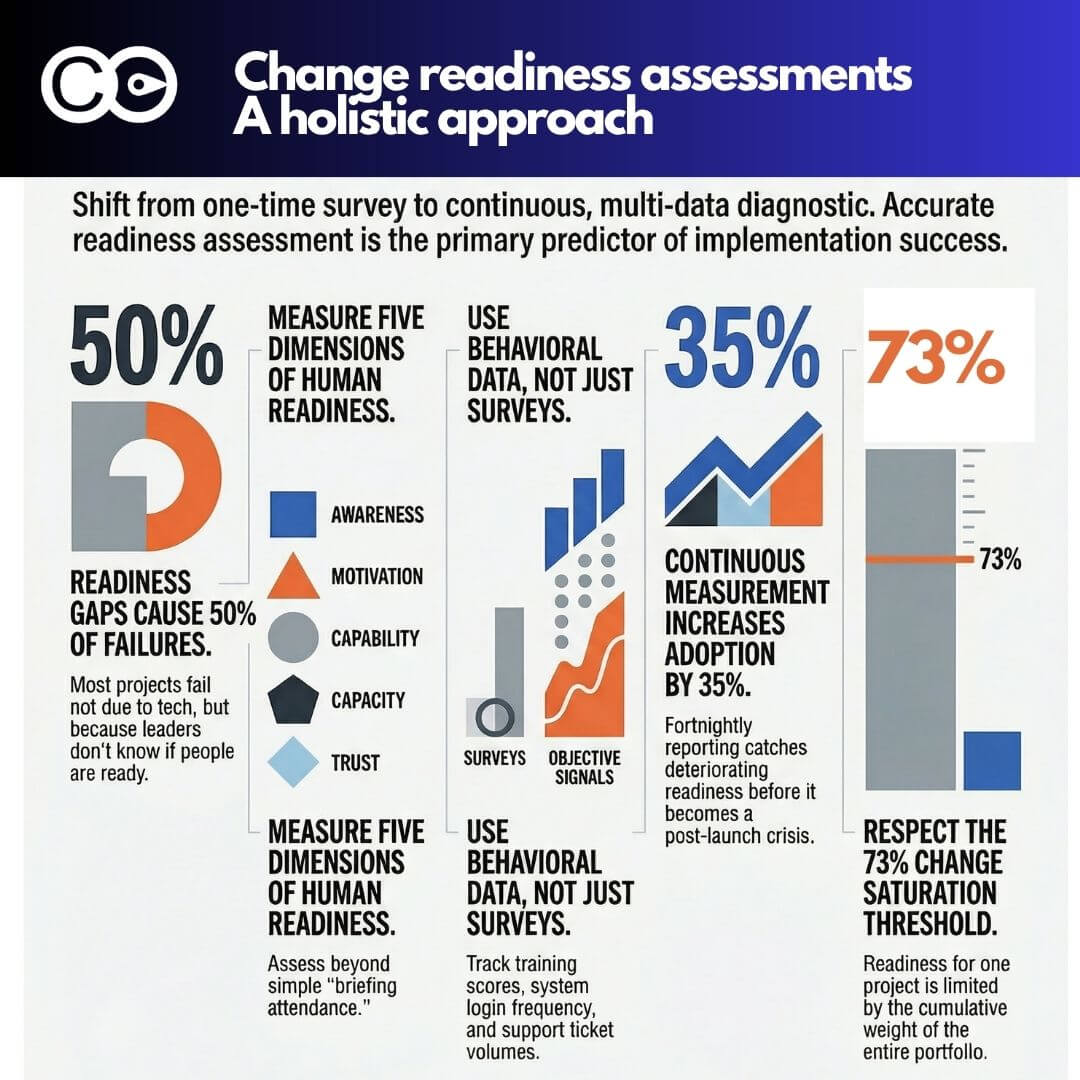

Saturation is a relative concept – it describes the relationship between demand and capacity, not demand alone. Measuring demand without reference to capacity produces a number with no meaning. The second essential component of a structured saturation measurement recipe is a capacity baseline: an estimate of how much change demand a specific employee group can absorb sustainably over a defined period.
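Expressed as arithmetic, saturation is a ratio of demand to capacity. The sketch below is one possible formulation: it assumes both are measured in the same units (for example, weighted impact points per quarter) and uses an assumed 10% band around the threshold to define the "at" state.

```python
def saturation_ratio(demand, capacity):
    """Demand relative to capacity; 1.0 means the group is exactly at capacity."""
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    return demand / capacity


def saturation_state(ratio, band=0.1):
    """Classify a ratio as within, at, or above the saturation threshold.

    The width of the 'at' band is an assumption, not a researched value.
    """
    if ratio > 1 + band:
        return "above"
    if ratio >= 1 - band:
        return "at"
    return "within"


print(saturation_state(saturation_ratio(demand=12, capacity=10)))
print(saturation_state(saturation_ratio(demand=5, capacity=10)))
```

The same demand of 12 points would be "within" for a group whose capacity is 20, which is why demand figures are not comparable across groups until they are divided by capacity.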

Capacity baselines can be established from multiple sources. Research-derived benchmarks – the published estimates of sustainable change load from organisations like Gartner and Prosci – provide starting points that can be calibrated to the specific context. Historical data – the correlation between past change load levels and subsequent adoption rates, attrition data, and engagement score movements – provides an empirical basis for establishing what level of change demand has historically been sustainable for specific employee groups in this organisation. And contextual factors – the current operational pressure on a team, their recent change history, their access to change support resources – adjust the baseline upward or downward based on factors the generic benchmarks do not capture.
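The three sources can be combined in many ways. The sketch below shows one simple option: average the research benchmark with the historical estimate when one exists, then apply contextual multipliers. The averaging rule, the factor names, and all numbers are assumptions made for illustration.

```python
def calibrated_capacity(benchmark, historical=None, context_factors=None):
    """Estimate a capacity baseline from up to three sources.

    benchmark:       starting point from published research
    historical:      load level that was sustainable in the past, if known
    context_factors: mapping of label -> multiplier (below 1 reduces capacity)
    """
    if historical is None:
        base = float(benchmark)
    else:
        # Assumption: weight research and history equally.
        base = (benchmark + historical) / 2
    for label, factor in (context_factors or {}).items():
        if factor <= 0:
            raise ValueError(f"multiplier for {label!r} must be positive")
        base *= factor
    return base


capacity = calibrated_capacity(
    benchmark=10,
    historical=8,
    context_factors={
        "peak operational period": 0.8,   # hypothetical downward adjustment
        "dedicated change support": 1.1,  # hypothetical upward adjustment
    },
)
print(round(capacity, 2))
```

Keeping the contextual factors as labelled multipliers makes each adjustment visible and open to challenge when the baseline is reviewed.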

Gartner research on change fatigue provides one of the most widely referenced frameworks for understanding capacity thresholds – specifically the finding that the average employee can effectively absorb a limited number of concurrent major changes before saturation occurs. Using this research as a calibration reference, combined with organisation-specific data, allows change leaders to establish saturation thresholds that are both research-grounded and contextually valid.

From measurement to actionable recommendations

The purpose of change saturation measurement is not to produce a number. It is to produce recommendations that stakeholders can act on. The measurement recipe therefore needs to specify not just how to assess saturation but how to translate the assessment into specific governance decisions and operational interventions.

At the governance level, saturation data should inform three types of decision: sequencing decisions (should this programme’s implementation be deferred because the affected teams are currently at or near their saturation threshold?), descoping decisions (can this programme be redesigned to reduce its saturation impact on the most overloaded employee groups without materially compromising its intended outcomes?), and resourcing decisions (does this programme require additional change support investment because the teams it is landing on have limited remaining adaptive capacity?).
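The three governance questions can be tied to the projected demand-to-capacity ratio for the affected group. The sketch below is a hypothetical triage rule, not a prescribed policy: the 0.9 "near threshold" cut-off and the option labels are assumptions, and a real governance body would weigh programme value alongside the numbers.

```python
def governance_options(current_load, added_load, capacity, near=0.9):
    """Suggest which governance questions to raise for a proposed programme.

    Loads and capacity are assumed to share the same units. The 'near'
    cut-off is an illustrative assumption.
    """
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    projected = (current_load + added_load) / capacity
    if projected > 1.0:
        # Over threshold: consider deferring or reducing the impact.
        return ["sequencing: defer", "descoping: reduce impact"]
    if projected >= near:
        # Little headroom left: consider extra change support.
        return ["resourcing: add change support"]
    return ["proceed as planned"]


print(governance_options(current_load=8, added_load=4, capacity=10))
print(governance_options(current_load=7, added_load=2.5, capacity=10))
print(governance_options(current_load=3, added_load=2, capacity=10))
```

Because the function takes the proposed programme's load as a separate argument, the same rule can be used to model a decision before it is made.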

At the programme level, saturation data should inform stakeholder engagement prioritisation (which teams need the most intensive support?), communication design (what communication approach is appropriate for teams in a high-saturation state versus those with ample capacity?), and the structure of transition support (what is the right blend of training, peer support, manager coaching, and post-go-live stabilisation for teams at different saturation levels?).

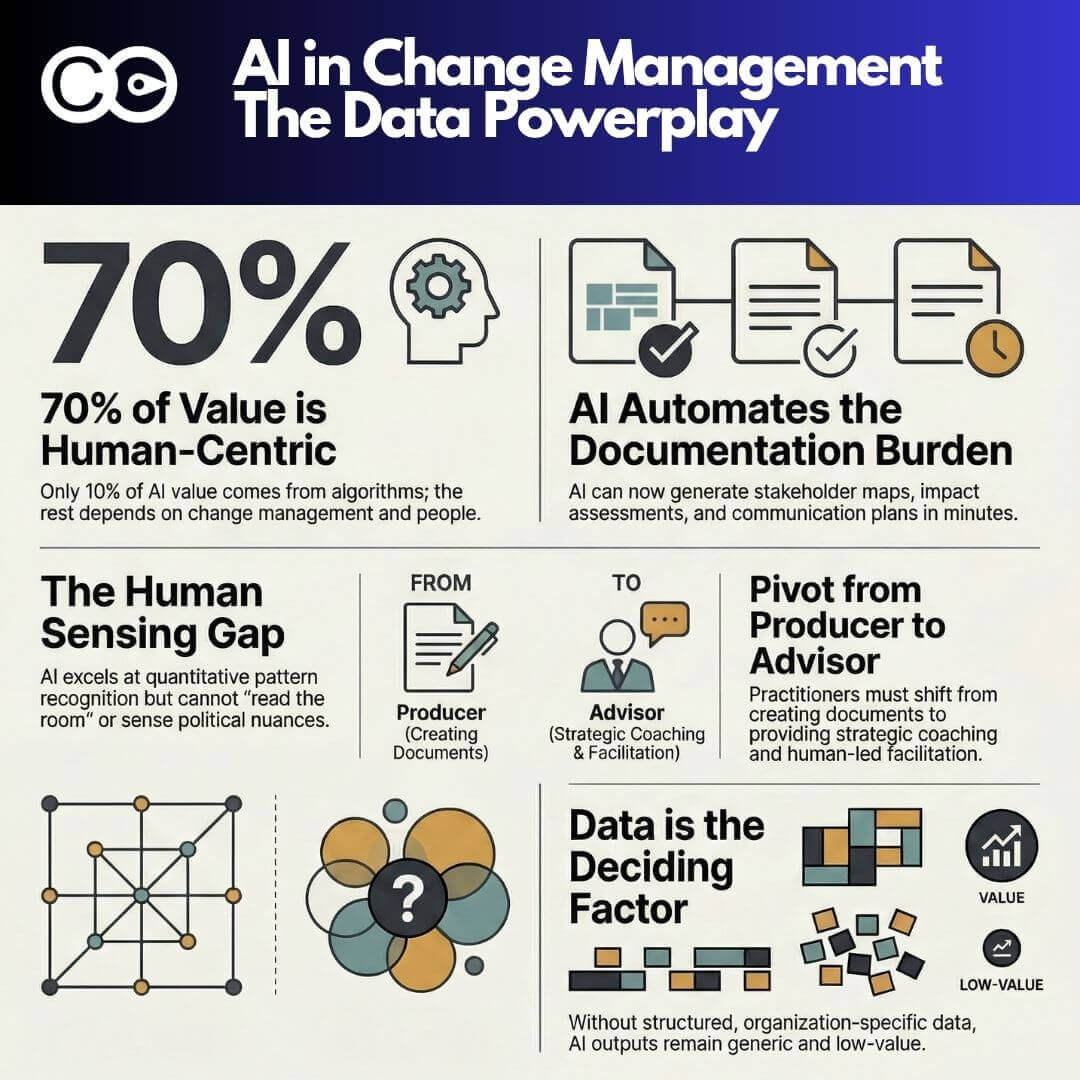

Platforms like The Change Compass support the full saturation measurement recipe by providing the data infrastructure – structured impact collection, portfolio aggregation by employee group, and visualisation of saturation against capacity thresholds – that makes this analysis operationally viable. Rather than assembling the measurement manually from programme-level spreadsheets, change leaders can access the saturation picture in real time and model the saturation implications of proposed portfolio decisions before committing to them.

Common mistakes in change saturation measurement

Several recurring errors undermine change saturation measurement efforts even in organisations that have invested in structured approaches. The first is measuring saturation at the wrong level of granularity. A division-level saturation score conceals the variation between teams within that division – a team experiencing extreme saturation may be averaged out by adjacent teams with much lighter loads, producing a comfortable aggregate that masks a genuine crisis at the team level. Effective saturation measurement requires resolution at the team or role group level, not the business unit level.
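A short worked example shows how averaging hides a saturated team. The team names and ratios below are invented for illustration; each ratio is demand divided by capacity, so anything above 1.0 is over threshold.

```python
from statistics import mean

# Hypothetical demand-to-capacity ratios for four teams in one division.
team_ratios = {
    "Claims": 1.6,
    "Billing": 0.5,
    "Support": 0.6,
    "Onboarding": 0.5,
}

# The division-level view: a single average across all teams.
division_average = mean(list(team_ratios.values()))

# The team-level view: which teams are individually over threshold.
over_threshold = [team for team, ratio in team_ratios.items() if ratio > 1.0]

print("Division average:", round(division_average, 2))
print("Teams over threshold:", over_threshold)
```

The division average sits comfortably below 1.0 while one team is well above it, so a report built on the aggregate alone would raise no alarm.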

The second mistake is measuring saturation at a single point in time rather than tracking it over a rolling period. A team that appears to be within its capacity threshold today may be accumulating load from changes that are about to peak simultaneously in the next quarter. Saturation measurement that shows only the current state rather than the projected trend line provides insufficient warning for the governance decisions that require lead time to implement.
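Projecting load forward can be sketched by summing the intensity of every change that is active in each month. The schedule, intensity scores, and capacity figure below are hypothetical, and the sketch assumes each change imposes a constant load for its whole duration, which is a simplification.

```python
from collections import defaultdict

# Hypothetical schedule: (start_month, end_month, intensity) for one team.
planned_changes = [
    (1, 3, 3),
    (3, 5, 4),
    (4, 6, 4),
]


def projected_load(changes):
    """Total intensity per month, summed over changes active in that month."""
    load = defaultdict(int)
    for start, end, intensity in changes:
        for month in range(start, end + 1):
            load[month] += intensity
    return dict(sorted(load.items()))


def breach_months(changes, capacity):
    """Months in which projected load exceeds the team's capacity."""
    return [m for m, total in projected_load(changes).items() if total > capacity]


print(projected_load(planned_changes))
print(breach_months(planned_changes, capacity=7))
```

In this example the team is well within capacity in month 1, yet the overlap of later changes pushes it over in months 4 and 5, which a point-in-time reading taken today would not reveal.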

The third mistake is treating the saturation assessment as separate from the portfolio governance process. Saturation data that is produced and then not connected to a decision-making process – where the data sits in a report that no governance body is empowered to act on – is not a management tool. It is a documentation exercise. McKinsey research on change programme failure consistently identifies the absence of in-flight decision authority as a primary cause of poor change outcomes – the data exists but no one has the authority or the process to act on what it shows. Connecting saturation measurement to governance structures with real authority to defer, descope, or resource programmes accordingly is what converts measurement from a reporting activity into a management capability.

Frequently asked questions

What is change saturation and how is it measured?

Change saturation occurs when the cumulative demand of concurrent change programmes on a specific employee group exceeds that group’s adaptive capacity. It is measured by combining three components: the volume of changes affecting the group, the intensity of those changes (the degree of behavioural shift each requires), and the timing concentration (how many significant changes overlap simultaneously). This demand measure is then compared against a capacity baseline to determine whether the group is operating within, at, or above its saturation threshold. Subjective self-report alone is insufficient as a saturation measure due to systematic biases in how saturation is perceived and reported.

How do you establish a capacity baseline for change saturation measurement?

Capacity baselines can be established from published research benchmarks (such as Gartner’s research on change fatigue and sustainable change load), from historical organisational data showing the relationship between past change load levels and adoption outcomes, and from contextual calibration factors such as the current operational pressure on the team, their recent change history, and their access to change support. The most reliable baselines combine all three sources, using the research as a starting point and calibrating it to the specific organisational context.

What decisions should change saturation data inform?

At the portfolio governance level, saturation data should inform decisions about programme sequencing (deferring changes to groups at or near saturation), descoping (reducing impact intensity for overloaded groups), and resourcing (allocating additional change support to high-saturation teams). At the programme level, it should inform stakeholder engagement prioritisation, communication design, and the structure of transition support. Saturation measurement that is not connected to a governance process with authority to act on its findings is a reporting activity rather than a management tool.

Why is team-level granularity important in change saturation measurement?

Business unit or division-level saturation scores conceal the variation between teams within those units. A team experiencing extreme saturation may be averaged out by adjacent teams with much lighter loads, producing an apparently comfortable aggregate score that masks a genuine crisis at the team level. Effective saturation measurement requires team or role group-level granularity to surface the concentrated saturation patterns that require targeted management responses and that business unit aggregates systematically obscure.

References

- Prosci, “ADKAR: A Model for Change in Business, Government and Our Community”

- Gartner, “This New Strategy Could Be Your Ticket to Change Management Success”

- McKinsey, “Why Change Management Programs Fail”