Agile has become the technical operating model for large organisations. You'll find Scrum teams in finance, Kanban boards in HR, Scaled Agile frameworks spanning entire technology divisions. The velocity and responsiveness are real. What's also becoming real, though...

Latest Blogs

Why Iterative, Agile Change Management Succeeds Where Linear Approaches Fail – Research Findings

Change management has long operated on assumptions. Traditional linear change management models were built on the premise that if you follow the steps correctly, organisational transformation will succeed. But in recent years, large-scale...

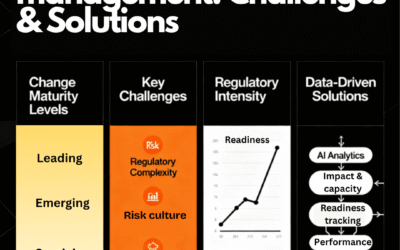

Successful change management in financial services: Drive transformation with agility

The pressure is relentless. Regulators demand compliance with new directives. Customers expect digital experiences rivalling fintech disruptors. Shareholders want innovation without compromising stability. Meanwhile, legacy infrastructure groans under the weight of...

The basics of agile change – the Agile Manifesto principles

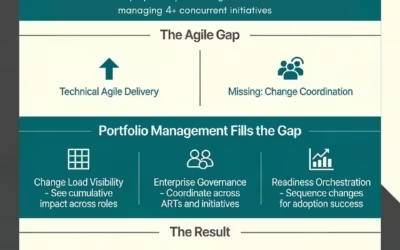

Without clear oversight of a collection of constantly moving changes, it is almost impossible, even with agile, to lead and embed change effectively.

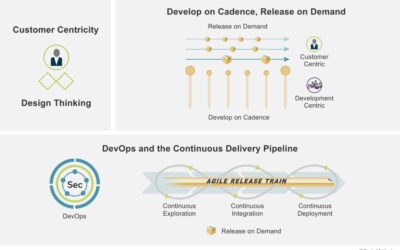

Program Increment (PI) planning

A Guide on Integrating Change Management with Scaled Agile for Seamless Product Delivery – Part 1

The need for organizations to remain flexible and responsive to market demands has never been more critical, and scaled agile (SAFe)...

How to use gamification for change: Your guide

Gamification is the application of game mechanics and elements to non-game activities, greatly enhancing the player experience. Whilst gamification has been around for a long time, it is only recently that it has been formalised as a structured method to achieve...

Top Five Agile Change Management Plan Toolkits You Need

What are the key components of an agile change management plan? An agile change management strategy includes key components such as a clear vision for change, stakeholder engagement strategies, iterative feedback loops, and adaptable processes. These elements...

Use this agile technique, little known in change management, to get the best outcomes

The MoSCoW method of prioritization is widely used by Business Analysts, Project Managers, and Software Developers in software development. The focus is on identifying, and agreeing with key stakeholders, the core levels of requirements that should be focused on...

Master Change Agility for Successful Change Management

In today’s dynamic business environment, marked by ongoing disruptions like environmental challenges, economic shifts, and the rapid advancement of AI tools, the pace of change demands that organizational agility and change readiness become critical capabilities for...

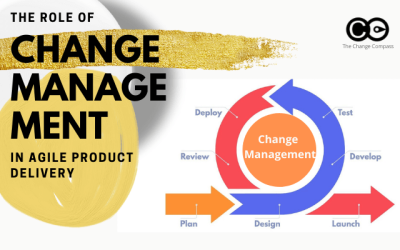

What you didn’t know about change in agile product delivery

So what is the role that change managers play in managing product changes during the agile product delivery process? What are the actions, approaches, deliverables and considerations required for change professionals in an agile environment?

The modern change management process: why iterative beats linear every time

In 2022, the average employee experienced ten planned enterprise changes simultaneously. In 2016, that number was two. Gartner's research on change fatigue documents what happened next: employee willingness to support change collapsed from 74% to 43% in the same...

Why change management is omitted from agile methodology

Agile methodology is fast becoming the ‘norm’ when it comes to project methodology. It promises strong benefits: faster development time, the ability to adapt to changing requirements, less time required to implement the solution, and a better ability to...