Designing a change adoption dashboard: what to measure, how to display it, and why it matters

A change adoption dashboard is one of the most underused tools in enterprise change management. Most organisations produce some form of change tracking, but relatively few have a dashboard that is genuinely decision-ready: one that tells programme sponsors and business leaders not just what activities have been completed, but whether the change is actually embedding in the way people work.

The difference between a change activity tracker and a change adoption dashboard is the difference between measuring what the change team has done and measuring whether the change is working. Getting that distinction right is what determines whether your dashboard becomes a governance instrument that drives action, or a reporting artefact that gets reviewed once a month and promptly ignored.

This guide sets out how to design an effective change adoption dashboard: the metrics to include at each stage of the change lifecycle, how to present them to different audiences, and how to use the dashboard to drive better decisions about where change support needs to be directed.

Why most change tracking falls short

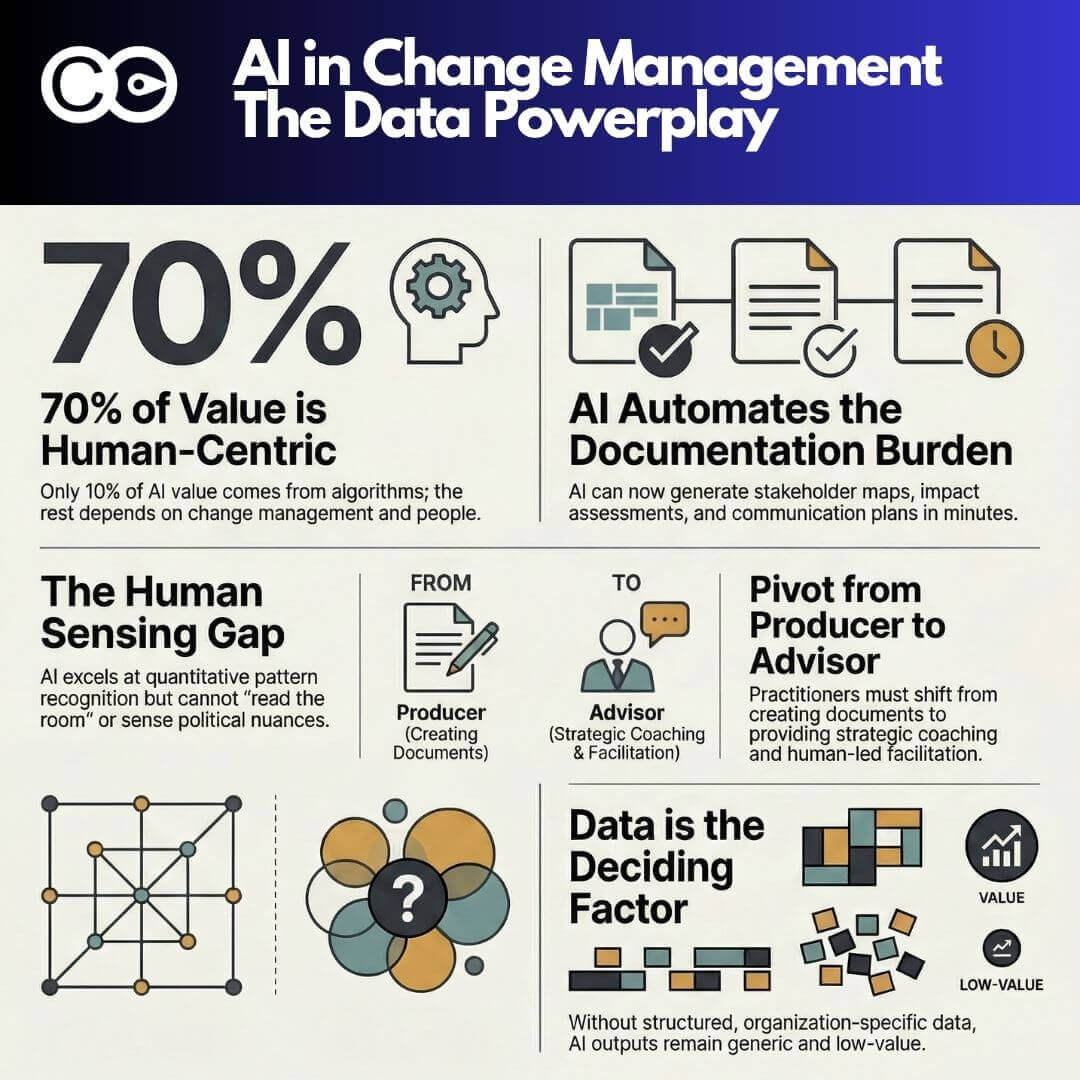

The most common failure mode in change measurement is tracking outputs rather than outcomes. A standard change plan tracks activities: how many communications have been sent, how many training sessions have been delivered, how many stakeholder meetings have been held. These outputs matter as indicators of delivery, but they tell you almost nothing about whether the change is landing.

Prosci’s best practice research on change management metrics identifies three distinct levels at which change should be measured: organisational performance (did we achieve the intended business results?), individual performance (are employees adopting and using the new way of working?), and change management performance (did we execute the change activities well?). Most organisations only measure the third level. A change adoption dashboard needs to cover all three.

The second common failure is building a dashboard that is designed for the change team rather than for the business. If the only people reading the dashboard are the programme change manager and their direct team, it has limited governance value. An effective dashboard needs to be useful to programme sponsors, business unit leaders, and transformation governance committees, which means it needs to be readable in under five minutes and to surface the questions and decisions that those audiences actually need to address.

The change adoption lifecycle and when to measure each stage

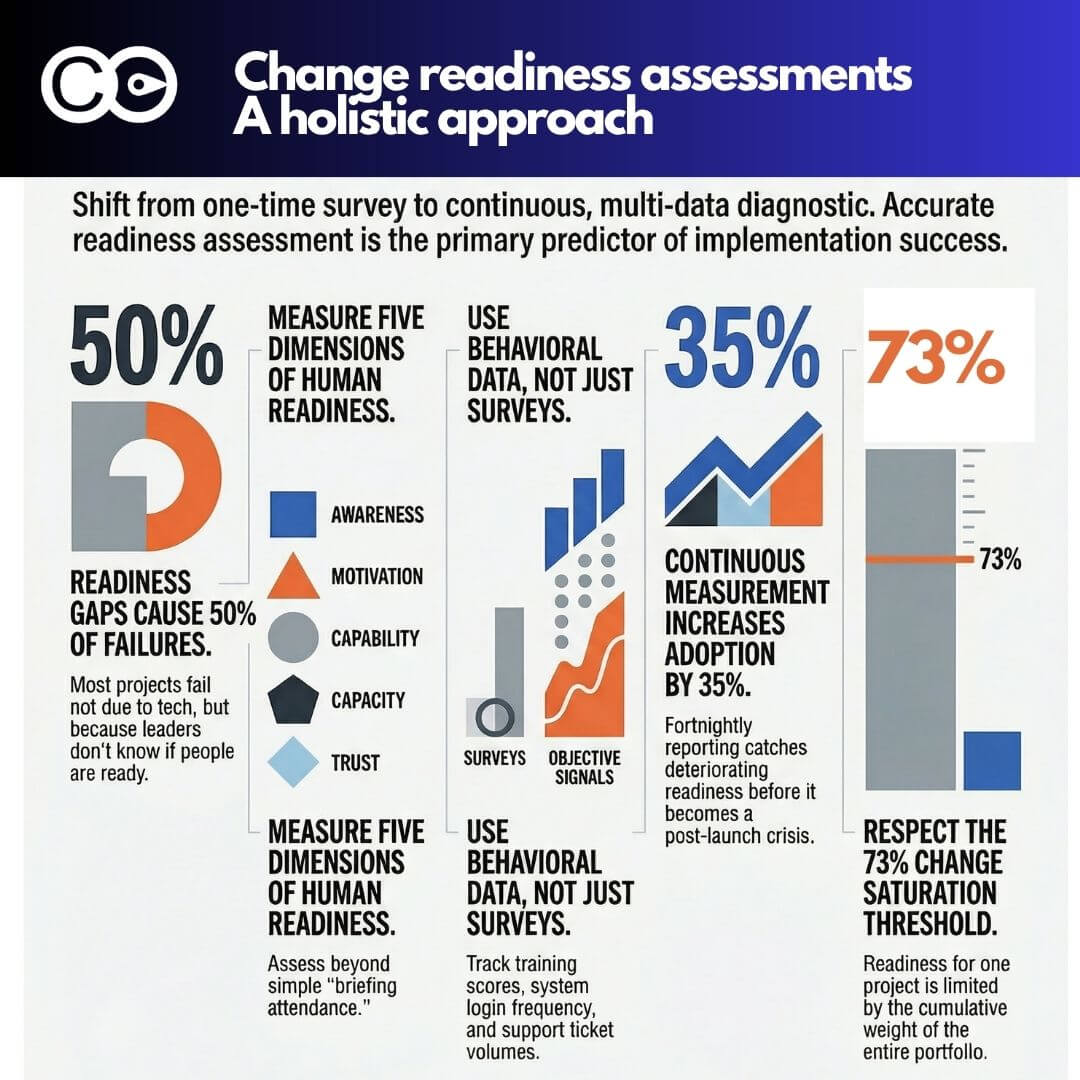

Change adoption is not a single moment. It is a journey from awareness through to sustained proficiency, and different metrics are relevant at different stages. Designing a dashboard without this lifecycle context leads to the most common design error: measuring adoption before people have had enough exposure to the change to actually adopt it.

The adoption lifecycle for most enterprise changes follows five stages: awareness, understanding, readiness, adoption, and proficiency.

Awareness is the entry point. Employees need to know the change is coming, what it involves at a high level, and why it is happening. Awareness metrics should be collected four to twelve weeks before go-live. Key indicators include communications reach (the percentage of the affected population who have received awareness communications) and awareness survey scores (the percentage who can correctly describe what is changing and when).

Understanding goes deeper than awareness. It measures whether employees understand what the change means specifically for their role: what they will need to do differently, when, and how they will be supported. Understanding metrics are typically collected two to six weeks before go-live. Key indicators include role-specific briefing attendance rates and understanding survey scores that capture how confident employees are about what the change means for their role.

Readiness measures whether employees feel prepared to perform differently at go-live. This is the most critical pre-go-live measurement point and the one where intervention time is most valuable. Readiness metrics collected four to six weeks before go-live allow targeted support to be deployed where confidence is lowest. Key indicators include readiness survey scores by group and role, manager readiness assessments, and training completion rates against plan.

Adoption is the first post-go-live measure. It answers the question: are people using the new system, process, or way of working? Adoption metrics should begin being collected from the first week after go-live and tracked at regular intervals for the following three to six months. Key indicators vary by change type but typically include system usage rates (for technology changes), process adherence rates (for process changes), and manager-observed behaviour alignment (for cultural or behavioural changes).

Proficiency is the sustained adoption measure. It goes beyond whether people are using the new way of working to whether they are using it well: accurately, efficiently, and without reverting to old habits under pressure. Proficiency metrics become relevant from three months post-go-live and are typically used in benefits realisation reviews. Key indicators include quality and error rates, process cycle times compared to target, and exception rates where the old process or system is being used in parallel.
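The measurement windows described for the five stages can be sketched as a simple lookup that tells a change team which stages are due for measurement in a given week. This is a minimal illustration, not a prescribed tool: the names `MEASUREMENT_WINDOWS` and `stages_to_measure` are hypothetical, and converting "three months" and "six months" to 13 and 26 weeks is an assumption.

```python
# Measurement windows in weeks relative to go-live (negative = before go-live).
# Pre-go-live windows follow the stage descriptions above. The post-go-live
# week counts (13 weeks ~ three months, 26 weeks ~ six months) are assumptions.
MEASUREMENT_WINDOWS = {
    "awareness": (-12, -4),
    "understanding": (-6, -2),
    "readiness": (-6, -4),
    "adoption": (1, 26),
    "proficiency": (13, None),  # open-ended: no upper bound
}


def stages_to_measure(weeks_from_go_live: int) -> list[str]:
    """Return the lifecycle stages whose measurement window covers the given week."""
    due = []
    for stage, (start, end) in MEASUREMENT_WINDOWS.items():
        if weeks_from_go_live >= start and (end is None or weeks_from_go_live <= end):
            due.append(stage)
    return due


# Five weeks before go-live, all three pre-go-live stages are in their window.
print(stages_to_measure(-5))
# Fourteen weeks after go-live, adoption and proficiency overlap.
print(stages_to_measure(14))
```

A lookup like this makes the overlap between stages explicit, which helps avoid the design error of reporting adoption figures before the adoption window has opened.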

The core metrics for a change adoption dashboard

Translating the lifecycle above into a dashboard requires selecting the right metrics and presenting them in a format that is actionable. The following are the metrics that belong in an enterprise change adoption dashboard, organised by lifecycle stage.

Pre-go-live metrics

Communication reach rate: the percentage of the target population who have received and opened change communications, tracked by communication channel and stakeholder group. A reach rate below 70% in the four weeks before go-live is a significant warning sign.

Training completion rate: the percentage of the enrolled population who have completed required training. Completion rates should be segmented by group, role type, and location to identify where completions are lagging. A completion rate below 80% two weeks before go-live typically indicates a resourcing or scheduling problem that needs immediate escalation.

Readiness score by group: the average self-reported confidence score from readiness surveys, segmented by business unit, role, and geography. The dashboard should display both the current score and the trend from the previous survey cycle. Groups scoring below the predefined readiness threshold should be highlighted as requiring intervention.

Manager readiness index: a composite score measuring the degree to which line managers feel equipped to support their teams through the change. This is often the single most predictive pre-go-live indicator of post-go-live adoption success, and it is frequently under-measured.
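As an illustration of how a rate-based pre-go-live metric and its warning threshold might be calculated, the sketch below computes training completion by group and lists the groups that fall under the 80% level mentioned above. The record format, group names, and function names are hypothetical; a real dashboard would read these records from a learning management system or a survey tool.

```python
from collections import defaultdict

COMPLETION_THRESHOLD = 0.80  # escalation level named in the text above


def completion_by_group(records):
    """Compute completion rate per group.

    records: iterable of (group, completed) pairs, one per enrolled employee.
    """
    enrolled = defaultdict(int)
    completed = defaultdict(int)
    for group, done in records:
        enrolled[group] += 1
        if done:
            completed[group] += 1
    return {group: completed[group] / enrolled[group] for group in enrolled}


def groups_needing_escalation(rates, threshold=COMPLETION_THRESHOLD):
    """Return the groups whose completion rate is below the threshold, sorted by name."""
    return sorted(group for group, rate in rates.items() if rate < threshold)


# Hypothetical sample data: 9 of 10 Finance staff and 3 of 5 Operations staff have completed.
sample = (
    [("Finance", True)] * 9 + [("Finance", False)]
    + [("Operations", True)] * 3 + [("Operations", False)] * 2
)
rates = completion_by_group(sample)
print(rates)
print(groups_needing_escalation(rates))
```

The same pattern applies to communication reach rate by swapping the threshold to 70% and the `completed` flag to an "opened" flag.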

Post-go-live adoption metrics

Adoption rate by group: the percentage of the target population actively using the new system, process, or way of working, segmented by business unit, role, and geography. For technology-enabled changes, this can be pulled directly from system usage analytics. For process changes, it requires direct observation or survey-based measurement.

Time to adoption: the time elapsed between go-live and each employee reaching a defined adoption threshold. Tracking the distribution of time to adoption by group allows the dashboard to identify which cohorts are lagging and where support needs to be concentrated.

Resistance indicators: quantified signals of active or passive resistance, including workaround usage rates (employees finding alternative ways to do tasks rather than using the new process or system), helpdesk ticket volumes related to the change, and manager-escalated concerns. A spike in resistance indicators in the first four weeks post-go-live often predicts below-target adoption at the three-month mark.

Benefits tracking progress: the percentage of expected benefits that are currently on track to be realised, measured against the programme business case. This metric links adoption data directly to the business value argument, which is what executive sponsors care most about.
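Adoption rate and time to adoption can be derived from the same per-employee record: the date on which each person first met the adoption threshold. The sketch below assumes that date is already available (for example from system usage analytics) and uses `None` for employees who have not yet adopted. The function names and the choice of the median as the summary statistic are assumptions made for illustration.

```python
from datetime import date
from statistics import median


def time_to_adoption_days(go_live, adopted_on):
    """Days between go-live and the date an employee reached the adoption threshold."""
    return (adopted_on - go_live).days


def summarise_group(go_live, adoption_dates):
    """Summarise one group.

    adoption_dates: one entry per employee in the group; None means not yet adopted.
    """
    if not adoption_dates:
        return {"adoption_rate": None, "median_days": None}
    adopted = [d for d in adoption_dates if d is not None]
    days = [time_to_adoption_days(go_live, d) for d in adopted]
    return {
        "adoption_rate": len(adopted) / len(adoption_dates),
        "median_days": median(days) if days else None,
    }


# Hypothetical group of four employees, one of whom has not yet adopted.
go_live = date(2024, 3, 4)
group = [date(2024, 3, 11), date(2024, 3, 18), date(2024, 4, 1), None]
print(summarise_group(go_live, group))
```

Running the summary per business unit, role, or geography gives the segmentation the dashboard needs to show which cohorts are lagging.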

Sustained proficiency metrics

Accuracy and error rate: for changes that affect operational processes or technology, the quality of outputs using the new way of working compared to target. An error rate that remains elevated three months post-go-live is a strong signal that proficiency support is needed.

Regression rate: the percentage of employees who initially adopted the new way of working but have since reverted to the old approach. Regression is often invisible without dedicated measurement, but it is one of the most common causes of benefits shortfall.

Embedding score: a composite metric capturing whether the change has become the standard way of working rather than an overlay on existing habits. Embedding is assessed through a combination of manager observation, peer review data, and process compliance audits.
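Regression rate is the easiest of these proficiency metrics to make concrete. A minimal sketch, assuming the dashboard holds two sets of employee identifiers (everyone who has ever met the adoption threshold, and everyone currently using the new way of working), might look like the following. The function name and the identifiers are hypothetical.

```python
def regression_rate(ever_adopted: set[str], currently_using: set[str]) -> float:
    """Share of employees who adopted at some point but are no longer using the new way of working."""
    if not ever_adopted:
        return 0.0
    regressed = ever_adopted - currently_using
    return len(regressed) / len(ever_adopted)


# Hypothetical identifiers: four employees adopted, two of them have since reverted.
ever = {"emp-a", "emp-b", "emp-c", "emp-d"}
current = {"emp-a", "emp-b", "emp-e"}
print(regression_rate(ever, current))  # 0.5
```

Using the population that has ever adopted as the denominator, rather than the whole target population, keeps regression separate from non-adoption, which the adoption rate already captures.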

Structuring the dashboard for different audiences

The most effective change adoption dashboards are not single views. They are layered presentations of the same underlying data, tailored to different audiences and different decision-making needs.

Programme governance dashboard (executive): designed for programme sponsors and steering committees. Should present adoption rate, readiness score, and benefits tracking progress as headline metrics, with a clear red/amber/green status indicator and a concise narrative on the key risks and decisions required. This view should be updatable in under two hours and presentable in under five minutes.

Business unit view (operational): designed for business unit managers and team leaders. Should present adoption and readiness data disaggregated to their specific group, with comparison to the programme-wide averages. The most valuable element for this audience is visibility of how their group is tracking relative to the rest of the organisation, which creates natural accountability.

Change management team view (delivery): designed for change practitioners managing the programme. Should present the full set of metrics across all lifecycle stages, with trend data, intervention history, and leading indicators. This is the working dashboard that drives day-to-day decisions about where to direct communication, coaching, and training activity.

For organisations managing multiple concurrent programmes, the portfolio-level view becomes critical. Change Compass provides a platform specifically designed for this challenge: aggregating adoption data across all programmes into a single portfolio view that allows change functions to see which programmes are embedding well, which are falling behind, and where cumulative adoption demand is creating saturation risk across the same stakeholder groups.

Building the data infrastructure behind the dashboard

A dashboard is only as reliable as the data feeding it. The most common reason change adoption dashboards fail in practice is not the design: it is the data infrastructure. Specific issues include inconsistent data collection across programmes (making aggregation impossible), manual data entry that is too time-consuming to maintain between reporting cycles, and survey instruments that are not standardised enough to allow trend comparisons.

Building a sustainable data infrastructure for a change adoption dashboard requires three things:

Standardised instruments across all programmes: every programme's change impact assessment, readiness survey, and adoption tracking instrument must collect data in a consistent format. Even small variations in scale or question wording make trend analysis unreliable. This requires a central change function to set and enforce standards, rather than leaving them to each programme team.

Automated data collection where possible: for technology-enabled changes, system usage data can be automatically extracted and fed into the dashboard without manual intervention. For survey-based metrics, lightweight pulse survey tools with automated scheduling significantly reduce the data collection burden.

A shared data platform: adoption data that sits in individual programme SharePoint folders or local spreadsheets cannot be aggregated or maintained over time. A shared platform with a structured data model is the difference between a dashboard that is updated once per quarter under duress and one that reflects the current state of the change portfolio at any given moment.

The Change Compass platform provides the data infrastructure and visualisation capability that makes enterprise-scale change adoption dashboards sustainable in practice. The Change Automator extends this with workflow automation that handles the routine data collection and update tasks that otherwise consume change team time.

Common design mistakes to avoid

Several predictable design errors undermine the value of change adoption dashboards in practice.

Measuring activity instead of adoption: including metrics like “number of communications sent” or “number of training modules completed” as headline figures in an executive dashboard confuses output with outcome. Activity metrics belong in the delivery team view, not in the governance dashboard.

Displaying data without a threshold or benchmark: a readiness score of 3.4 out of 5 means nothing without knowing what the target is and what the score was last cycle. Every metric on a change adoption dashboard should have a defined threshold and a trend indicator.

Updating the dashboard too infrequently: monthly updates are almost always insufficient for a governance dashboard. The lag between data collection and reporting creates a situation where by the time a risk is flagged, the window for effective intervention has often already passed. The minimum viable cadence for most programmes is fortnightly for pre-go-live readiness metrics and weekly for the first month post-go-live.

Failing to link adoption to business value: the audience that controls change management resources and sequencing decisions is an executive audience that thinks in terms of business outcomes. A dashboard that cannot connect adoption performance to expected benefits realisation will always struggle to secure the attention and action it needs.
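The point above about thresholds and trend indicators can be made concrete with a small status function. The sketch below is one possible convention, not a standard: the 10% amber margin, the 0.05 trend tolerance, and the function names are all assumptions that each organisation would set for itself.

```python
def rag_status(value, target, amber_margin=0.10):
    """Green at or above target; amber within amber_margin (a fraction of target) below it; red otherwise."""
    if value >= target:
        return "green"
    if value >= target * (1 - amber_margin):
        return "amber"
    return "red"


def trend(current, previous, tolerance=0.05):
    """Direction of movement since the previous cycle; changes within tolerance count as flat."""
    delta = current - previous
    if abs(delta) <= tolerance:
        return "flat"
    return "up" if delta > 0 else "down"


# The readiness score of 3.4 from the example above, against a hypothetical
# target of 4.0 and a hypothetical previous-cycle score of 3.1.
print(rag_status(3.4, 4.0), trend(3.4, 3.1))
```

Shown together, the two values tell a sponsor something a bare score cannot: a group that is below target but improving calls for a different decision from one that is below target and declining.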

Making the dashboard drive action

The ultimate test of a change adoption dashboard is not how well it is designed. It is whether it changes what happens. A dashboard that produces beautifully formatted reports that are reviewed and filed without any change to programme decisions has no value.

The governance mechanism around the dashboard matters as much as the design. Every metrics review session should conclude with explicit decisions: which groups need additional support, what form that support will take, who is accountable for delivering it, and by when. The intervention log in the dashboard should record these decisions and track their completion.

Prosci research on change management KPIs consistently finds that organisations that actively measure and act on change data are significantly more likely to meet or exceed their project objectives. Measurement without action is just administration. The purpose of a change adoption dashboard is to make the right interventions happen at the right time.

From dashboard to decision: a practical starting point

For change functions starting from scratch, the path to a decision-ready change adoption dashboard does not require building everything at once. The practical starting point is selecting three to five metrics that are already collectible with current resources, establishing a defined threshold for each, setting a regular reporting cadence, and creating a standing agenda item in programme governance for the dashboard review.

Once that foundation is in place, additional metrics, automation, and portfolio-level aggregation can be layered in progressively. The organisations with the most effective change adoption dashboards today did not build them in a single programme cycle. They built them iteratively, driven by the decisions they needed to make and the data that could most reliably inform those decisions.

Frequently asked questions

What should be on a change adoption dashboard?

An effective change adoption dashboard should include metrics across the full adoption lifecycle: pre-go-live metrics covering communication reach, training completion, and readiness scores; post-go-live adoption metrics including adoption rate by group, time to adoption, and resistance indicators; and proficiency metrics covering accuracy rates, regression rates, and embedding scores. Each metric should have a defined threshold and a trend indicator against the previous reporting period.

How often should a change adoption dashboard be updated?

For programmes in active delivery, fortnightly is the minimum viable update cadence for pre-go-live readiness metrics. In the first four to six weeks after go-live, weekly updates are appropriate for adoption metrics, as this is the highest-risk period for early regression. Once adoption has stabilised above target, monthly updates for proficiency metrics are generally sufficient.

What is the difference between adoption rate and proficiency rate?

Adoption rate measures whether employees are using the new system, process, or way of working at all. Proficiency rate measures whether they are using it correctly and efficiently. Both are important, but they become relevant at different points in the change lifecycle. Adoption is the first post-go-live measure; proficiency becomes meaningful from three to six months post-go-live once the initial learning curve has passed.

How do you measure change adoption for a process change (not a system change)?

For process changes without a system to generate automatic usage data, adoption measurement relies on a combination of manager observation scorecards (structured assessments of whether teams are following the new process), quality and error rate data from process outputs, exception tracking (instances where the old process is being used instead of the new one), and periodic survey-based self-assessment. These methods are more resource-intensive than system analytics but are entirely viable with a clear measurement framework.

What is a good target adoption rate?

A benchmark adoption target of 70 to 90% of the target population actively using the new way of working is commonly used in enterprise change programmes, with 80% being a typical minimum threshold for declaring a change “embedded.” However, the right target depends on the nature of the change, the risk of non-adoption, and the degree to which proficiency is required for the intended benefits to be realised. Critical compliance or safety changes require higher adoption thresholds than optional process improvements.

References

- Prosci. (2024). Metrics for Measuring Change Management. https://www.prosci.com/blog/metrics-for-measuring-change-management

- Whatfix. (2024). 12 Change Management KPIs and Metrics to Track in Your Dashboard. https://whatfix.com/blog/change-management-kpis/

- Pipefy. (2024). 11 Change Management KPIs You Must Track in 2024. https://www.pipefy.com/blog/change-management-kpis/

- Prosci. (2024). The Correlation Between Change Management and Project Success. https://www.prosci.com/blog/the-correlation-between-change-management-and-project-success

- OCM Solution. (2024). Best Change Management Metrics and KPIs for Change Managers. https://www.ocmsolution.com/tracking-and-measurement/