Financial services transformation refers to the structured programmes through which banks, insurers, wealth managers and capital markets firms reshape how they deliver value, manage risk and operate across the front, middle and back office. It is not a single category of...

Latest Blogs

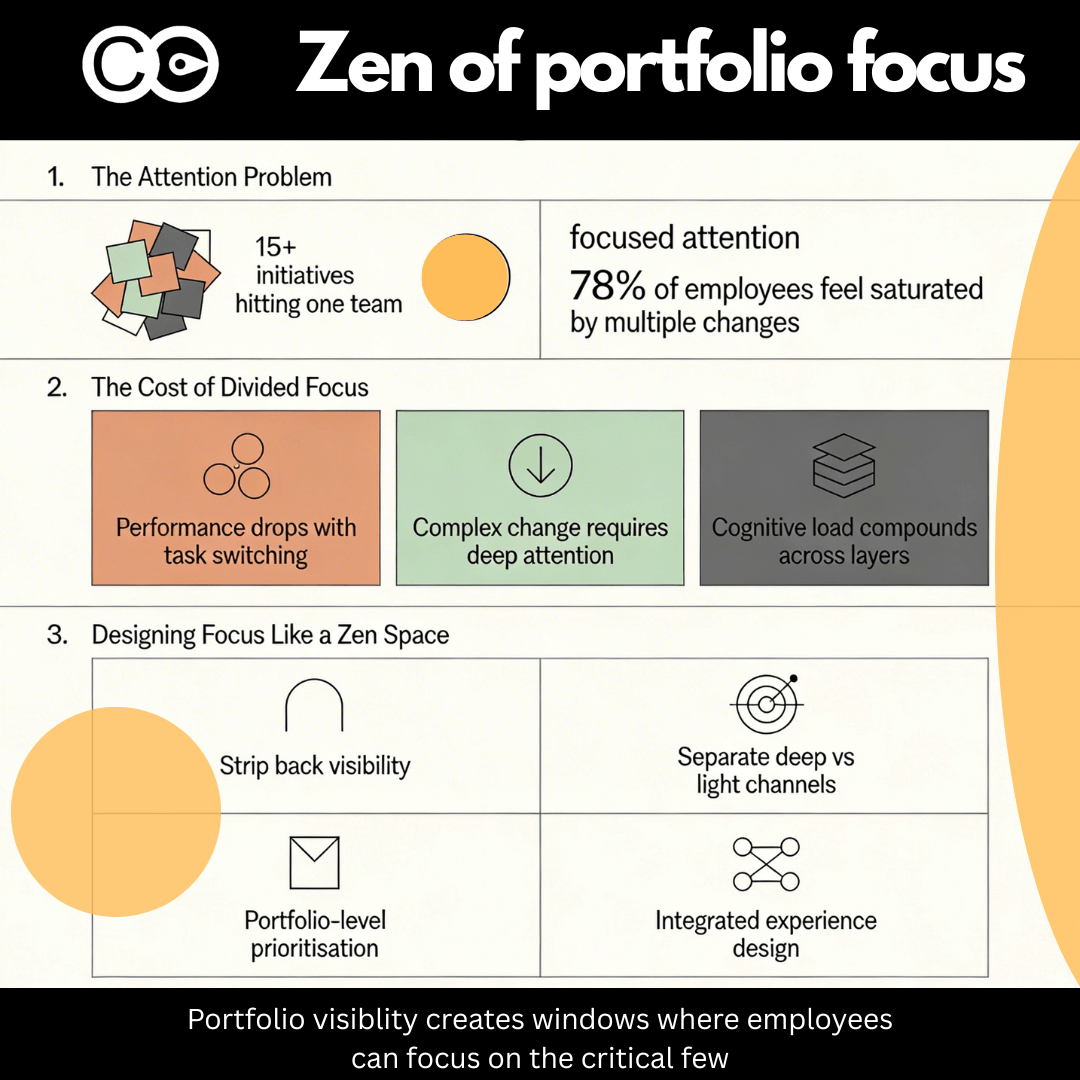

Designing Focus in a Noisy Change Portfolio: What Zen Minimalism Teaches Us About Employee Capacity

Most organisations now compete on how much change they can push through the system. Very few compete on how well they design focus. Travelling through Japan, visiting zen temples and the art islands of Teshima and Naoshima, I was struck by how intentional design...

Why peak productivity disruption happens 2 weeks after go-live

Most organisations anticipate disruption around go-live. That's when attention focuses on system stability, support readiness, and whether the new process flows will actually work. But the real crisis arrives 10 to 14 days later. Week two is when peak disruption hits....

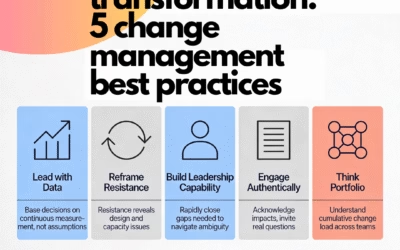

Managing change: Best practices for leading organisational transformation

Managing organisational change at scale is the systematic application of methodology, leadership behaviour and operational discipline to take large groups of people from current ways of working through to new, embedded ways of working. The best practices that...

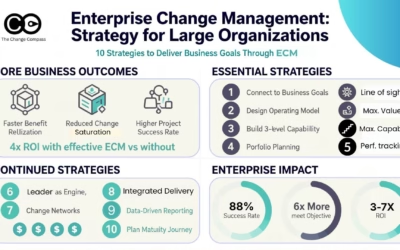

Enterprise Change Management: Strategy for Large Organizations

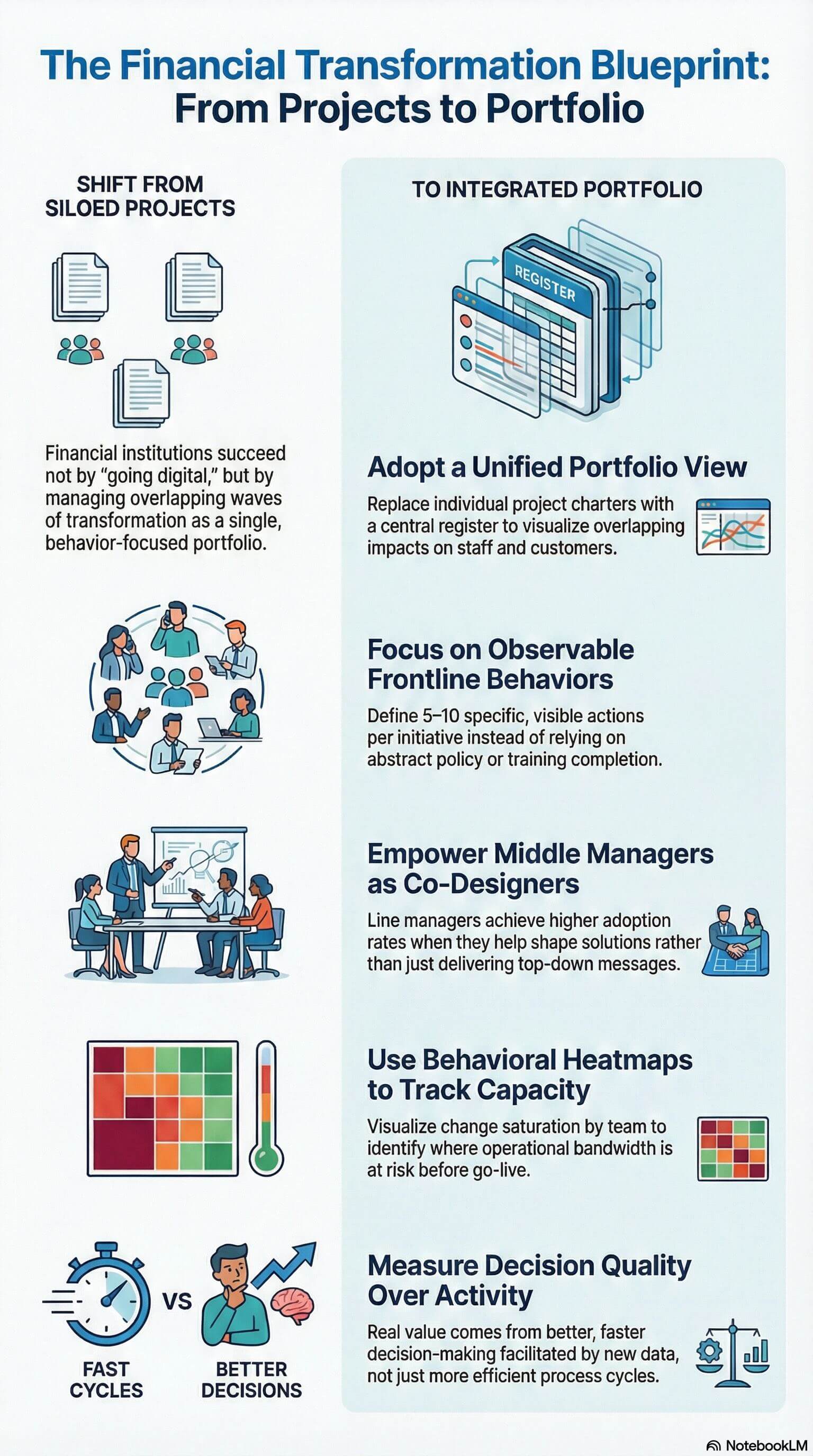

An enterprise change management strategy is the deliberate, multi-year plan that determines how an organisation will build and apply change capability at scale across every business unit, programme and transformation initiative. It covers the operating model...

Change Saturation: 73% of Organisations Are Already at the Breaking Point

Change saturation is the operational condition where the volume, pace and concurrency of initiatives demanded of an organisation's workforce exceed the capacity those teams have to absorb new ways of working. Symptoms include declining adoption rates, missed go-live...

Enterprise change management frameworks and processes

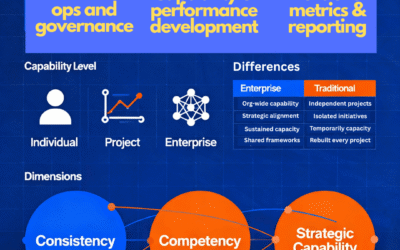

What is enterprise change management? Enterprise change management represents a fundamental evolution beyond traditional project-based change approaches. Rather than treating change as a series of isolated initiatives, enterprise change management (ECM) establishes...

The big difference between change management and enterprise change management

Understanding the real distinction between traditional, project-focused change management and the practice of enterprise change management (ECM) opens the door to a structured approach to genuine organisational agility and resilience. While project-based...

Rethinking Change Management – The “Light at the End of the Tunnel” Analogy

When navigating the complexities of organizational change, leaders often rely on analogies to communicate the journey and keep their teams motivated. One common analogy used in traditional change management is the “light at the end of the tunnel,” which portrays the...

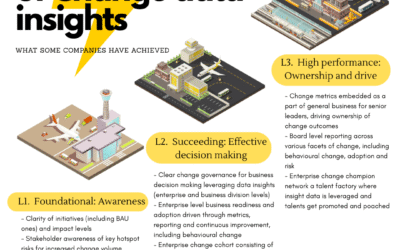

The Secret Powers of Change Data: How Insights Unlock Enterprise-Wide Transformation and Performance

Why Change Data is the Hidden Superpower Every Business Leader Needs. The Untapped Potential of Change Data. For years, many senior leaders have viewed organisational change as an art, a blend of communications, stakeholder engagement, and leadership sponsorship. While...

Measuring Change Adoption Across Multiple Initiatives

In today’s fast-paced business environment, most organizations are running numerous change initiatives simultaneously, including organizational transformation. These initiatives might range from digital transformation efforts to restructuring, new product...

The Enterprise Change Champion Model: How to Build Change Capability and Talent – Simultaneously

Rethinking Change Champions Beyond the Project Lens. For decades, the change champion has been a familiar figure in large-scale transformation projects – the trusted liaison between the change team and the business, responsible for rallying colleagues,...