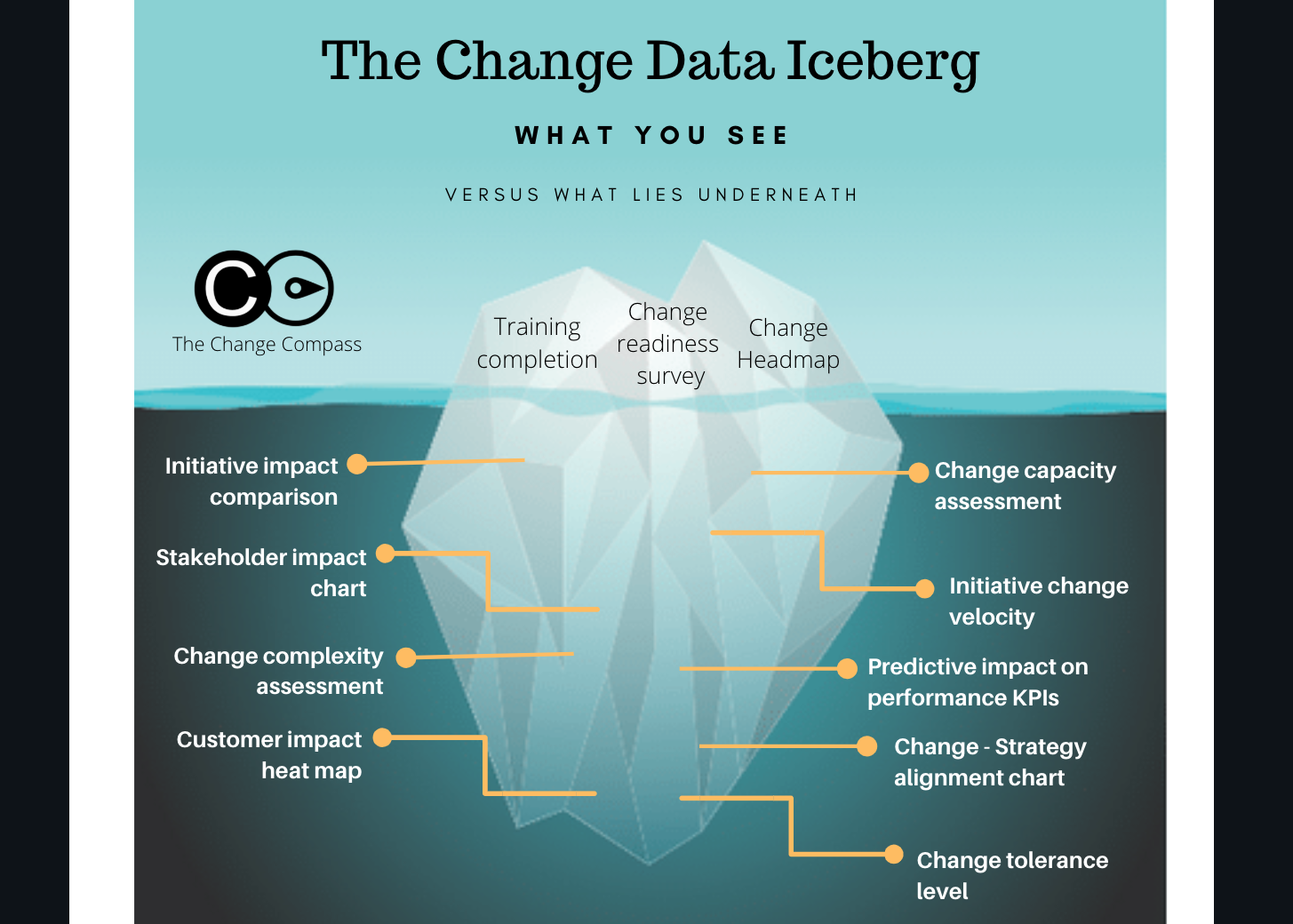

Most organisations that attempt to measure change management effectiveness measure the same narrow set of indicators: training completion rates, attendance at awareness sessions, results from pulse surveys, and the familiar heatmap showing which teams are affected by which programmes. These measures are not worthless. But they represent only the visible surface of what change data can actually tell you. Below the waterline lies a much richer dataset that most organisations have never systematically collected or analysed — and that contains the information actually needed to manage change at scale.

The change data iceberg is a useful way to visualise this gap. What sits above the surface is visible, easy to collect, and widely reported. What sits below is harder to surface, requires more deliberate effort to measure, and is substantially more predictive of change outcomes. Organisations that restrict their change measurement to surface-level indicators are managing their change portfolio with a partial view of reality. Those that invest in the deeper data layers develop a genuine predictive capability — the ability to identify where change is at risk before the symptoms become visible.


What sits above the waterline: visible change data

The change data that most organisations measure sits at the surface of the iceberg. These are the indicators that are easiest to collect, most familiar to stakeholders, and most often reported in programme status updates. They include training completion rates — the percentage of affected employees who have completed required training modules or attended scheduled sessions. They include attendance at change events: town halls, briefings, workshops. They include the outputs of awareness communications: email open rates, intranet page views, video completion rates. And they include change heatmaps — visual representations of which teams or roles are affected by which programmes at which points in time.

These surface-level indicators serve a legitimate purpose. They tell programme teams whether the basic mechanics of change delivery are functioning — whether people are showing up, whether communications are being received, whether the logistics of the training programme are on track. They provide a useful accountability layer for change delivery activity.

Their limitation is that they measure inputs and activities, not outcomes. A team can achieve 100 percent training completion and still not adopt the new process. Employees can attend every briefing session and still not understand how the change affects their role. Communications can reach every inbox and still not generate the comprehension and engagement that behavioural change requires. Surface-level change data tells you that the change programme did things. It does not tell you whether those things worked.

The middle layers: readiness and comprehension data

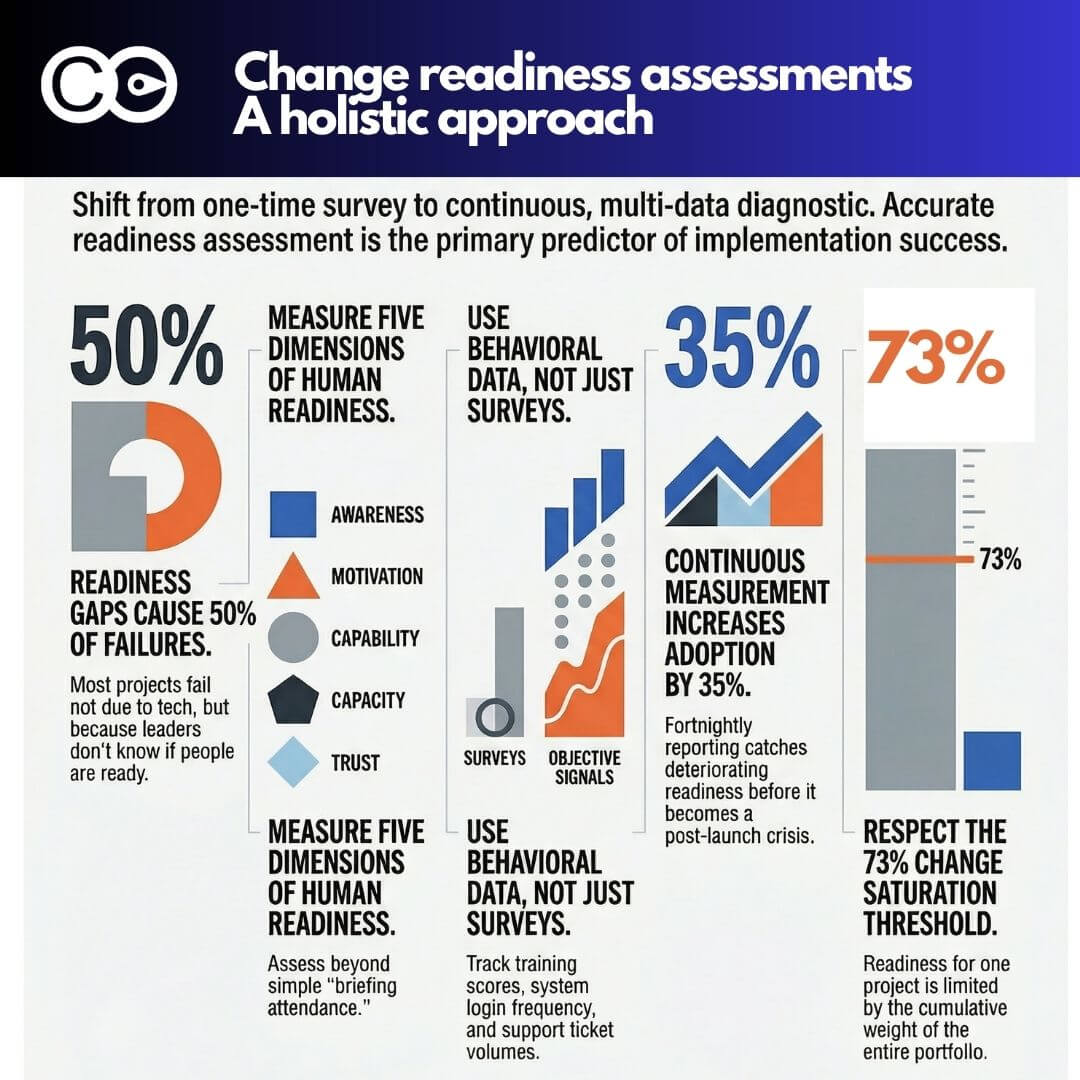

The first level below the surface of the iceberg contains data about employee readiness and comprehension — whether people understand what is changing, what it means for their role, and whether they feel equipped to perform in the new environment. This data is more difficult to collect than surface indicators because it requires asking people about their subjective state rather than recording objective activity data. But it is substantially more predictive of change outcomes.

Readiness data can be collected through structured pulse surveys aligned to change programme milestones, through facilitated team discussions with a consistent set of probing questions, or through manager-reported assessments that capture the team-level picture through the lens of the person best placed to observe it. The most useful readiness indicators ask about specific, concrete dimensions: does the employee understand what their role will look like after the change? Do they know where to go if they encounter problems during the transition? Do they feel the organisation has prepared them adequately for the new requirements?
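To make the three readiness questions above concrete, here is a minimal sketch of how pulse-survey responses might be summarised by dimension. The dimension keys, the 1 to 5 agreement scale and the 3.5 threshold are illustrative assumptions, not a published standard; the point is that each dimension is scored separately, so a team that understands its new role but does not know where to get help is not hidden inside one overall average.

```python
from statistics import mean

# Hypothetical keys for the three dimensions named in the text, each answered
# on an assumed 1-5 agreement scale.
DIMENSIONS = ("role_clarity", "support_awareness", "preparedness")


def readiness_gaps(responses: list[dict[str, int]], threshold: float = 3.5) -> dict[str, float]:
    """Return the mean score of every dimension that falls below the threshold.

    Dimensions nobody answered are skipped rather than treated as zero.
    """
    gaps: dict[str, float] = {}
    for dim in DIMENSIONS:
        scores = [r[dim] for r in responses if dim in r]
        if scores and mean(scores) < threshold:
            gaps[dim] = mean(scores)
    return gaps
```

For two respondents scoring role clarity 4 and 5, support awareness 2 and 3, and preparedness 4 and 3, only `support_awareness` (mean 2.5) is flagged; preparedness sits exactly at 3.5 and is not below the threshold.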

Comprehension data is distinct from awareness data. Awareness means someone has received information about the change. Comprehension means they understand it well enough to act on it. Change teams that focus on delivering information rather than verifying understanding consistently underestimate the gap between the two. Prosci's ADKAR model makes this distinction explicit: Awareness and Knowledge are separate stages (with Desire between them), and organisations that conflate them systematically overestimate their change readiness.

Deeper layers: adoption and capability data

Further below the surface lies adoption data — evidence of whether employees are actually performing in the new way that the change requires. This is arguably the most important category of change data because it directly measures the outcome the organisation is trying to achieve. Yet it is among the least systematically collected, partly because it requires coordination between the change programme and the operational systems that can provide the relevant signals.

Adoption data takes different forms depending on the type of change. For a system implementation, it might include login rates, feature usage rates, and the number of workaround behaviours being observed (employees using the old system in parallel with the new one). For a process change, it might include error rates in the new process, the time taken to complete tasks under the new approach versus the old, and the frequency with which exceptions are being raised. For a structural reorganisation, it might include the degree to which new reporting lines are being respected in practice versus in name.
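For the system-implementation case, the three indicators above can be derived from per-employee usage data. The sketch below assumes a hypothetical `UsageRecord` with three flags per reporting period; in practice these would come from system logs owned by the IT team. One design choice worth noting: an employee who is active only in the old system counts as not adopting, whereas a workaround is defined here as someone active in both systems during the same period.

```python
from dataclasses import dataclass


@dataclass
class UsageRecord:
    """One affected employee's activity over a reporting period (hypothetical schema)."""
    employee_id: str
    logged_into_new_system: bool
    used_key_feature: bool
    used_legacy_system: bool  # still active in the old system


def adoption_indicators(records: list[UsageRecord]) -> dict[str, float]:
    """Return login, feature-usage and workaround rates as fractions of all affected staff."""
    if not records:
        raise ValueError("no affected employees in scope")
    n = len(records)
    logged_in = [r for r in records if r.logged_into_new_system]
    return {
        "login_rate": len(logged_in) / n,
        # Measured against everyone affected, not only those who logged in.
        "feature_usage_rate": sum(r.used_key_feature for r in records) / n,
        # Workaround: active in the new system AND still using the old one.
        "workaround_rate": sum(r.used_legacy_system for r in logged_in) / n,
    }
```

With four employees where three log in, two use the key feature, and two of the three who logged in are also still using the legacy system, the sketch reports a login rate of 0.75, a feature usage rate of 0.5 and a workaround rate of 0.5.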

Below adoption data sits capability data — evidence of whether employees have genuinely developed the skills and knowledge needed to perform in the new environment at the required level of proficiency. Training completion tells you someone sat through a programme. Capability data tells you whether they can do the job. Assessment scores are one indicator, but the most reliable capability data comes from observed performance in real work contexts rather than training environments.

The deepest layer: change load and capacity data

At the deepest level of the iceberg sits data that most organisations do not collect at all: the aggregate change load on specific employee groups, measured across the entire change portfolio rather than within individual programmes. This is the data that reveals whether a team is being asked to absorb more change than its adaptive capacity can handle — and it is invisible to any measurement system that operates at the programme level.

Change load data requires a portfolio-level view. It involves aggregating the impacts of all concurrent programmes on a given team or role group and comparing that aggregate load against historical data or research-derived benchmarks for what constitutes sustainable change demand. Without this data, organisations routinely overload specific employee groups — inadvertently, because no one is looking at the cumulative picture.

Gartner research on change fatigue found that employees who experience high change fatigue are significantly less likely to intend to stay with their organisation and substantially less likely to successfully adopt change. The mechanism is straightforward: each change demands cognitive and emotional resources from the same finite pool. When that pool is depleted by simultaneous changes, employees enter a state of change fatigue where their capacity to absorb new demands is severely limited — and even well-designed, well-supported changes land poorly.

Measuring change load requires structured data collection about the nature, timing, and intensity of impacts associated with each programme, aggregated by team or role group across the portfolio. This is not a trivial undertaking, but it is what separates organisations with genuine change measurement maturity from those that are measuring activity and calling it measurement.
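The aggregation step can be illustrated with a short sketch. It assumes each programme records its impacts in a shared format: a hypothetical `Impact` with the affected team, the week the impact lands, and an intensity on an assumed 1 to 3 scale. Loads are summed per team and week across every programme, then compared against a threshold. The threshold here is a placeholder parameter; a real one would come from the historical data or benchmarks described above.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Impact:
    """One programme's impact on one team (hypothetical shared schema)."""
    programme: str
    team: str
    week: int       # week in which the impact lands
    intensity: int  # assumed scale: 1 = low, 2 = medium, 3 = high


def change_load(impacts: list[Impact]) -> dict[tuple[str, int], int]:
    """Sum impact intensity per (team, week) across every programme in the portfolio."""
    load: dict[tuple[str, int], int] = defaultdict(int)
    for impact in impacts:
        load[(impact.team, impact.week)] += impact.intensity
    return dict(load)


def overloaded(load: dict[tuple[str, int], int], threshold: int) -> list[tuple[str, int]]:
    """Return the (team, week) cells whose cumulative load exceeds the threshold."""
    return sorted(cell for cell, total in load.items() if total > threshold)
```

For example, if a CRM rollout lands a high-intensity impact (3) on Sales in week 10 and a pricing review lands a medium one (2) in the same week, Sales carries a load of 5 that week. With a threshold of 4, that cell is flagged even though neither programme exceeds the threshold on its own, which is exactly the cumulative effect that programme-level measurement cannot see.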

Why organisations stay at the surface

Given the predictive value of the deeper data layers, it is worth asking why most organisations restrict their change measurement to surface indicators. Several factors contribute. The first is convenience: surface data is easy to collect and exists within systems that change programmes already manage. Training platforms produce completion data automatically. Email systems produce open rate data. No additional investment or coordination is required.

The second factor is the programme incentive structure. Change programmes are typically resourced and governed to deliver activities rather than outcomes. When a change programme is judged on whether training was delivered on time and whether communications were sent, there is limited incentive to collect data that might reveal the activities were insufficient. Deeper change data creates accountability that surface data does not.

The third factor is the portfolio measurement gap. Even organisations that have invested in programme-level change measurement often lack the infrastructure to aggregate data across programmes. Impact assessments sit within individual programme documentation rather than in a shared data layer that allows portfolio-level analysis. Change load data requires a cross-programme view that no single programme team can produce unilaterally.

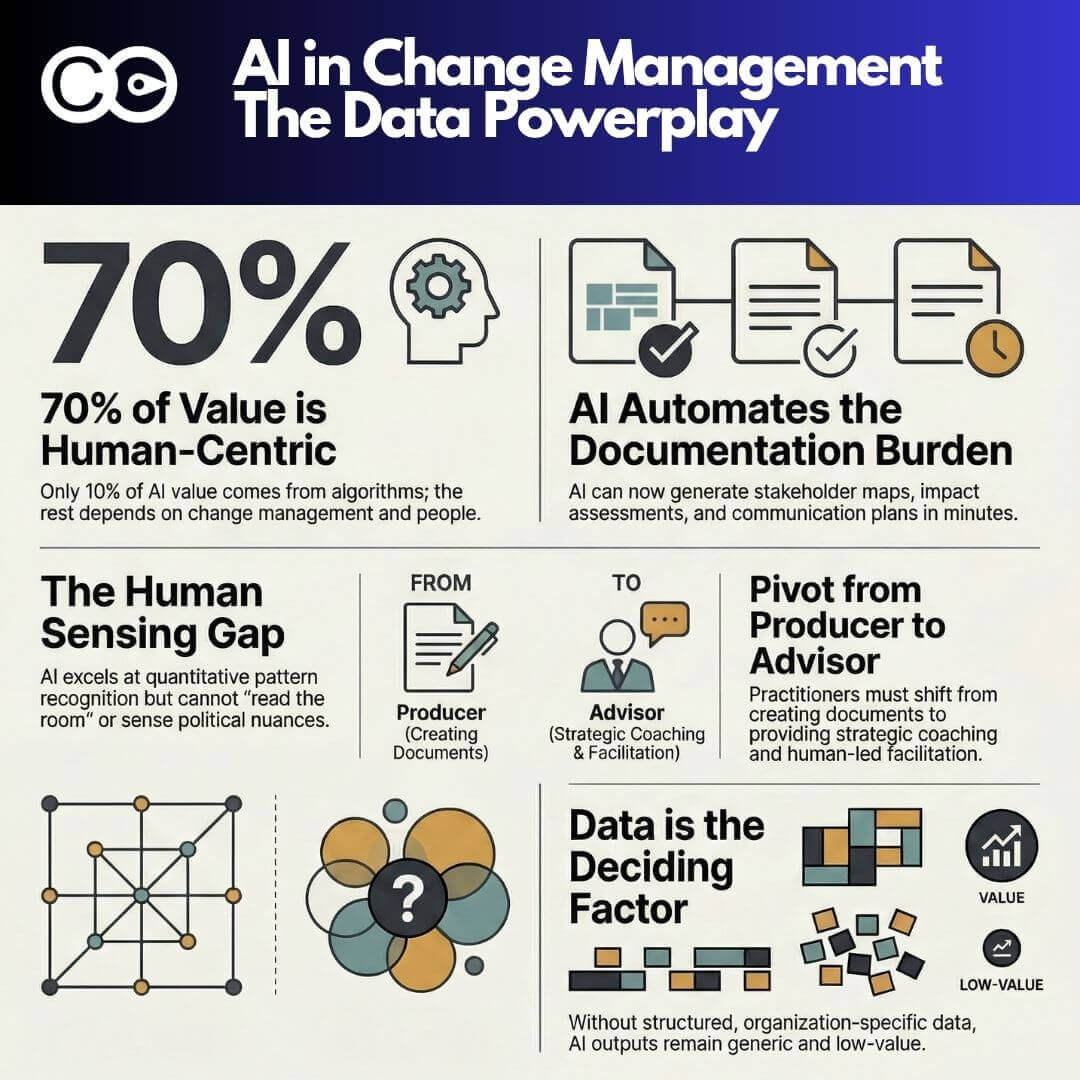

This is precisely the problem that change management platforms are designed to address. Tools like The Change Compass create a shared data infrastructure that aggregates change impact data across the portfolio, enabling the deeper measurement layers — change load, capacity, and cumulative impact by employee group — that are invisible to programme-level measurement systems. By making the full iceberg visible rather than just the surface, these platforms give change leaders and executives the data they need to make informed decisions about pacing, sequencing, and resourcing.

Building a change measurement framework

Moving from surface measurement to full-iceberg measurement is a progressive journey rather than a single investment. Organisations that attempt to implement comprehensive change measurement all at once typically struggle with data quality, stakeholder buy-in, and analytical capacity. A more effective approach is to build the measurement capability incrementally, starting with the surface indicators that already exist and adding deeper layers as capability and confidence develop.

The first step is to standardise the surface data that already exists. Many organisations collect training completion data, but the definitions vary across programmes — different standards for what counts as complete, different timeframes for reporting, different denominators for calculating rates. Standardising these basics creates a consistent baseline and builds the data governance habits that will be needed for deeper measurement.
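One way to standardise a completion rate is to encode a single shared definition that every programme reports against. The sketch below assumes one such definition, chosen for illustration rather than taken from any standard: the denominator is every employee in the programme's affected population, and the numerator is those who completed on or before the reporting date. The `TrainingRecord` fields are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class TrainingRecord:
    employee_id: str
    in_scope: bool                # belongs to the programme's affected population
    completed_on: Optional[date]  # None if the module has not been completed


def completion_rate(records: list[TrainingRecord], as_of: date) -> float:
    """Completion rate under one assumed shared definition.

    Denominator: every in-scope employee (not just those who enrolled).
    Numerator: in-scope employees who completed on or before the reporting date.
    """
    in_scope = [r for r in records if r.in_scope]
    if not in_scope:
        raise ValueError("no in-scope employees; the rate is undefined")
    completed = [
        r for r in in_scope
        if r.completed_on is not None and r.completed_on <= as_of
    ]
    return len(completed) / len(in_scope)
```

Fixing the denominator and the cut-off date in one place is the main design choice: it stops one programme dividing by enrolled staff and another by affected staff, or counting completions that happened after the reporting period closed.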

The second step is to add structured readiness and comprehension measurement at key milestones. A consistent pulse survey deployed to affected employee groups at go-live and at 30 and 90 days after implementation gives early warning of readiness and comprehension gaps, and of emerging adoption problems, while the programme still has the resources and attention to respond to what the data reveals.
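The milestone cadence can be expressed as a simple schedule, with a check that flags any wave whose average readiness score fell compared with the previous one. This is a minimal sketch: the offsets come from the text, while the milestone labels and the idea of comparing one mean score per wave are illustrative assumptions.

```python
from datetime import date, timedelta

# Offsets from the text: go-live, then 30 and 90 days after implementation.
MILESTONE_OFFSETS_DAYS = {"go-live": 0, "day 30": 30, "day 90": 90}


def pulse_schedule(go_live: date) -> dict[str, date]:
    """Return the date on which each pulse survey wave should be issued."""
    return {
        milestone: go_live + timedelta(days=offset)
        for milestone, offset in MILESTONE_OFFSETS_DAYS.items()
    }


def declining_waves(wave_means: dict[str, float]) -> list[str]:
    """Return milestones whose mean readiness score fell versus the previous wave."""
    # Keep milestone order from the schedule, skipping waves not yet run.
    names = [m for m in MILESTONE_OFFSETS_DAYS if m in wave_means]
    return [
        later
        for earlier, later in zip(names, names[1:])
        if wave_means[later] < wave_means[earlier]
    ]
```

For a go-live on 3 March 2025, the waves fall on 3 March, 2 April and 1 June. If mean scores are 3.8, 3.4 and 3.6, only the day-30 wave is flagged as a decline.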

The third step is to connect change measurement to operational data. Adoption indicators that draw on system usage, process performance, or error rates provide a more objective picture than self-reported readiness data alone. This requires coordination between the change programme and the operational or IT teams that own the relevant data sources, but the resulting measurement is substantially more credible.

The fourth step is to establish portfolio-level change load tracking. This requires a consistent approach to impact assessment across all programmes (a shared taxonomy for categorising the nature and intensity of change impacts) and an aggregation mechanism that makes the cumulative picture visible to someone with the authority to act on it. Research on the quality of organisational decision-making emphasises the role of comprehensive, timely information in good judgment, and portfolio-level decisions are no exception. Without that information, the deepest drivers of change programme failure, such as change fatigue, inadequate capacity and accumulation effects, remain invisible until they manifest as resistance, attrition, or implementation failure.

Frequently asked questions

What is the change data iceberg?

The change data iceberg is a model for understanding the full range of data available to change management practitioners. The visible surface of the iceberg represents the data most organisations already collect: training completion rates, communication metrics, change heatmaps, and attendance data. Below the waterline lie richer data layers — readiness and comprehension data, adoption and capability indicators, and portfolio-level change load data — that are more predictive of change outcomes but require more deliberate investment to collect and analyse.

Why is training completion rate insufficient as a change measurement?

Training completion rate measures whether an employee attended or completed a training programme. It does not measure whether they understood the content, whether they can apply it in their role, or whether they have adopted the new process or behaviour the change requires. It is an input measure, not an outcome measure. Organisations that rely primarily on completion rates consistently overestimate their change readiness because they are measuring activity rather than the comprehension and capability that activity is intended to produce.

What is change load data and why does it matter?

Change load data is a measure of the aggregate change being experienced by a specific team or role group across all concurrent change programmes at a given point in time. It matters because individual employees have finite adaptive capacity, and when the cumulative demand from multiple simultaneous changes exceeds that capacity, even well-designed changes land poorly. Change load data is only visible at the portfolio level — no single programme can produce it, because each programme only sees its own impacts. Organisations that lack portfolio-level change load data routinely overload specific employee groups without realising it.

How can organisations start measuring deeper change data?

The most practical starting point is to standardise existing surface measurements to create a consistent baseline, then add structured readiness pulse surveys at key programme milestones. From there, organisations can progressively add operational adoption indicators by connecting change measurement to system usage and process performance data. Portfolio-level change load tracking requires a shared data infrastructure across programmes, which is most effectively supported by a dedicated change management platform that aggregates impact data across the portfolio.

References

- Prosci, “ADKAR: A Model for Change in Business, Government and Our Community”

- Gartner, “This New Strategy Could Be Your Ticket to Change Management Success”

- Harvard Business Review, “The Elements of Good Judgment”