Change readiness assessment: a step-by-step guide for change managers

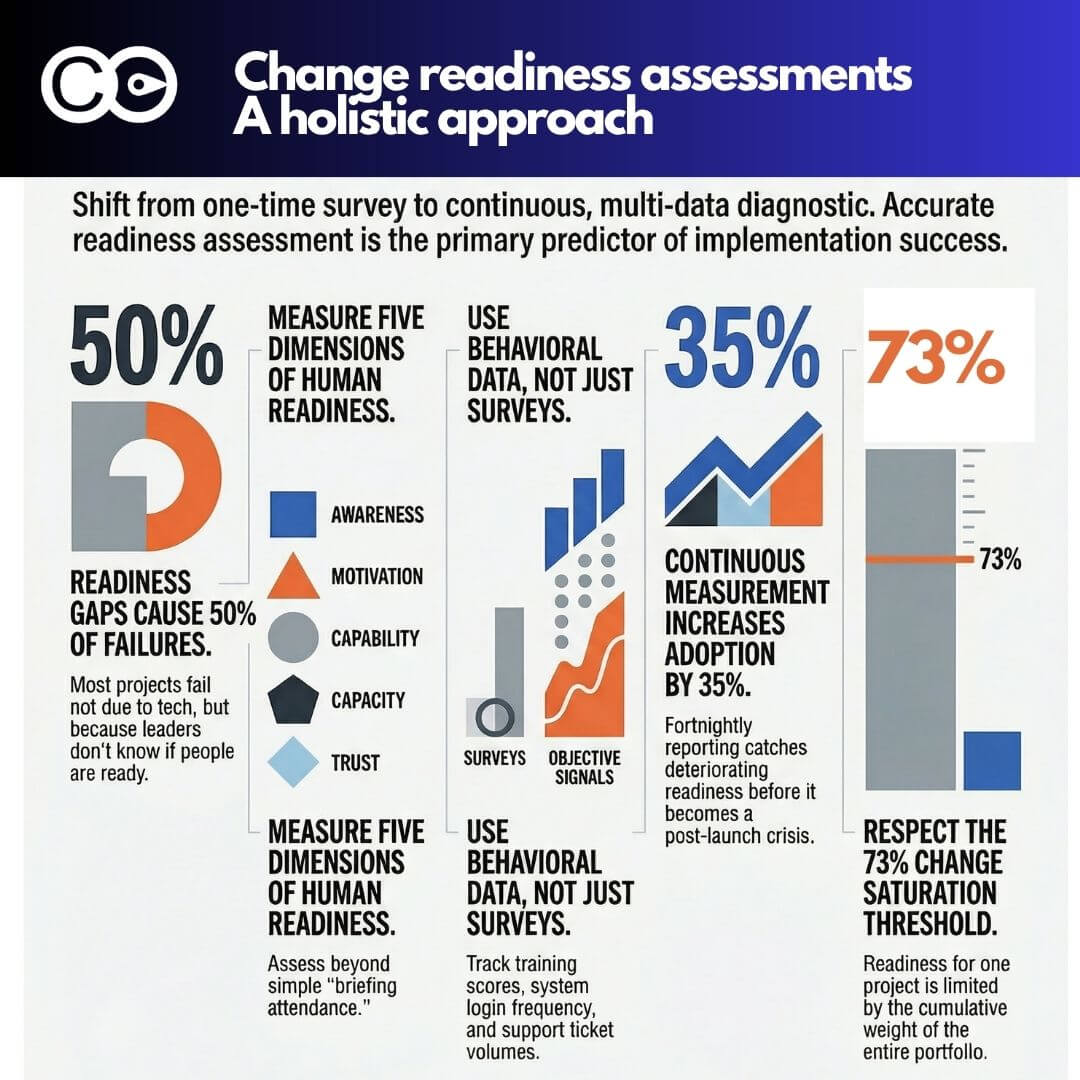

Research from public health implementation science has found that failure to accurately assess readiness accounts for around half of all implementation failures. Half. Not communication gaps, not technology issues, not sponsor disengagement. Simply not knowing whether people were actually ready before the change went live.

That number should give every change manager pause. Because the most common readiness tool in most organisations is a pre-launch survey, sent three weeks before go-live, with a 40% response rate and results that confirm what the project team already suspected. That is not readiness assessment. That is a post-rationalisation exercise.

Genuine change readiness is a dynamic, multi-dimensional condition. It reflects whether employees have the awareness, motivation, capability, and psychological bandwidth to adopt a specific change right now, not just whether they attended a briefing session and ticked a box. And critically, it is shaped not only by their attitudes toward your particular initiative, but by everything else being asked of them simultaneously across the entire change portfolio.

This guide sets out a practical, evidence-based approach to change readiness assessment: one that goes well beyond the survey, incorporates behavioural and system data, uses AI to accelerate synthesis, and accounts for the cumulative weight of change that real employees actually carry.

Why readiness is the real precursor to adoption

There is a persistent assumption in change management circles that adoption follows awareness. Build enough awareness, communicate clearly, and people will eventually adopt. The evidence does not support this. Prosci’s longitudinal research, drawing on more than 8,000 data points from organisations globally, shows that initiatives with excellent change management are six times more likely to meet their objectives than those with poor change management, and that the jump from awareness to actual behavioural change is where most programmes falter.

The ADKAR model is instructive here. Awareness and Desire are prerequisites for Knowledge, but Knowledge does not automatically produce Ability. People can understand exactly what a change requires and still be unable or unwilling to do it. Readiness sits squarely in that gap between knowing and doing. It is the accumulated condition of a person at a specific point in time: their confidence, their capacity, their trust in leadership, their workload, and their sense of whether this change is worth the effort it demands.

The Prosci research is unambiguous: when change management is applied with excellence, approximately 80% of projects meet or exceed their objectives. With poor or absent change management, that figure drops to 14%. The readiness assessment is your early-warning mechanism for which trajectory you are on.

What makes readiness especially critical is its predictive value. A readiness gap identified six weeks before go-live is actionable. The same gap identified two weeks post-launch is a crisis. Organisations that conduct continuous readiness measurement, rather than a single pre-launch snapshot, achieve 25–35% higher adoption rates than those relying on one-time assessment. Readiness is not a checkbox on a project plan. It is a continuous diagnostic.

The problem with survey-only readiness assessment

Surveys are useful. They are scalable, they are comparable over time, and when designed well they can surface genuine sentiment. But as the sole readiness instrument, they have serious limitations that most organisations overlook.

First, surveys measure declared intent, not demonstrated behaviour. A person can respond positively to “I feel confident using the new system” and still default to the old process when the pressure is on. The intention-behaviour gap is well documented in psychology: what people say they will do and what they actually do are often quite different, particularly in high-pressure or ambiguous environments.

Second, surveys are a lagging signal. By the time results are collated and reported, the organisation has moved on. Conditions change fast, particularly when multiple initiatives are running concurrently and team-level dynamics shift week by week.

Third, response rates and response bias skew the picture. Those most likely to respond to a readiness survey are often those with the strongest views: either enthusiastic adopters who inflate the readiness score, or disengaged resistors who depress it. The large silent middle, whose readiness is often the critical variable, is systematically underrepresented.

Finally, surveys can tell you that a readiness gap exists but rarely why it exists. Knowing that 42% of respondents feel “not confident” with the new process is interesting. Understanding whether that is driven by inadequate training, distrust of leadership, competing priorities, or unclear role expectations requires a different kind of data entirely.

A multi-method framework for change readiness assessment

Robust readiness assessment treats the survey as one of several data sources, not the primary one. The framework below sets out a step-by-step approach that change managers can apply to any initiative, from a technology rollout to a structural reorganisation.

Step 1: define the readiness dimensions for your specific change

Before deploying any assessment method, clarify what readiness actually means for this change. Generic readiness scales are rarely sufficient. An ERP implementation demands a different kind of readiness from a culture change programme. For each initiative, identify the specific dimensions you need to assess. These typically include:

- Awareness: Do people understand what is changing, why, and what it means for their role?

- Motivation: Do people see a personal benefit or at least a compelling reason to engage?

- Capability: Do people have the skills, knowledge, and tools required to operate in the new way?

- Capacity: Do people have the time and bandwidth to absorb this change given their current workload?

- Trust and confidence: Do people trust that the change is being well-managed and that leadership is genuinely committed?

This scoping step prevents you from measuring the wrong things and ensures your assessment data connects directly to actionable interventions.

Step 2: use surveys as one signal, not the signal

Design your readiness survey around the specific dimensions you identified in Step 1, not a generic template. Keep it short (eight to twelve questions maximum), include at least two open-text questions to surface qualitative nuance, and run it at multiple points rather than once. Segment results by team, location, role, and manager, because aggregate scores mask the local variation that drives or blocks adoption.
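To illustrate why segmentation matters, here is a minimal Python sketch. The teams, scores, and the `GAP_THRESHOLD` value are all hypothetical, chosen only to show how a middling overall average can hide one team that is clearly struggling.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical survey responses: (team, confidence score on a 1-5 scale).
responses = [
    ("Finance", 4), ("Finance", 5), ("Finance", 4),
    ("Operations", 2), ("Operations", 1), ("Operations", 2),
    ("Sales", 4), ("Sales", 4), ("Sales", 5),
]

def segment_means(rows):
    """Return the mean score per segment (here, per team)."""
    by_segment = defaultdict(list)
    for segment, score in rows:
        by_segment[segment].append(score)
    return {segment: mean(scores) for segment, scores in by_segment.items()}

overall = mean(score for _, score in responses)
by_team = segment_means(responses)

# Flag teams sitting well below the overall mean (threshold is an assumption).
GAP_THRESHOLD = 1.0
lagging = [team for team, avg in by_team.items() if overall - avg >= GAP_THRESHOLD]

print(f"Overall: {overall:.2f}")
print("Lagging teams:", lagging)
```

The same function works for any segment key (location, role, or manager) if you swap what goes in the first position of each row.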

Critically, build in a follow-up protocol for low-readiness scores. A survey that identifies a problem but triggers no response is worse than no survey at all: it signals to employees that their concerns were collected and ignored.

Step 3: gather behavioural and system data

This is where most change readiness assessments have a blind spot, and where the most honest picture of readiness lives. Behavioural and system data reflects what people are actually doing rather than what they say they will do.

Depending on your change, this data might include:

- Training completion rates and assessment scores: Not just whether people attended, but how they performed. Low scores in required competency modules are a direct readiness signal.

- System adoption data: Login frequency, feature utilisation, process completion rates, and error rates in new systems. These are real behavioural readiness indicators that most organisations already collect but rarely route to change teams.

- Help desk and support ticket volumes: Spikes in support requests after go-live indicate either inadequate readiness or inadequate training design. Tracking ticket categories reveals exactly where readiness gaps are concentrated.

- Process compliance data: Are people following the new process or reverting to old workarounds? Audit trails in systems like CRM, ERP, or workflow tools can reveal this directly.

- Attendance and participation in change activities: Who is attending information sessions, completing pre-work, or engaging with change networks? Absence from these touchpoints is a passive readiness signal.

The discipline here is routing this data to change managers in near-real time, rather than leaving it siloed in IT systems or HR platforms where it is never seen through a readiness lens.
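As one example of reading system data through a readiness lens, the sketch below counts support tickets by category and then shows which teams account for the largest category. The ticket records, category names, and function names are hypothetical; a real export from a help desk tool would need mapping into this shape first.

```python
from collections import Counter

# Hypothetical support tickets logged after go-live: (team, category).
tickets = [
    ("Operations", "invoice approval"),
    ("Operations", "invoice approval"),
    ("Finance", "login"),
    ("Operations", "invoice approval"),
    ("Sales", "report export"),
    ("Finance", "invoice approval"),
]

def top_categories(rows, n=2):
    """Count tickets per category and return the n most frequent."""
    return Counter(category for _, category in rows).most_common(n)

def share_by_team(rows, category):
    """Return each team's share of the tickets in one category."""
    teams = Counter(team for team, cat in rows if cat == category)
    total = sum(teams.values())
    return {team: count / total for team, count in teams.items()}

hotspots = top_categories(tickets)
approval_share = share_by_team(tickets, "invoice approval")

print("Most frequent categories:", hotspots)
print("Share of invoice approval tickets:", approval_share)
```

In this made-up data, one process step generates most of the tickets and one team raises most of them, which points to a targeted intervention rather than organisation-wide retraining.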

Step 4: conduct manager sensing and pulse reporting

Frontline and middle managers see readiness in ways that no survey can capture. They hear the informal conversations, notice who is quietly resistant, observe who needs extra support, and understand the team-level dynamics that shape how change lands.

Structured manager sensing involves brief, regular check-ins (typically fortnightly) in which managers report on a small number of consistent indicators: team sentiment, specific concerns raised, any behavioural changes in response to the upcoming change, and their own confidence in supporting the transition. This data should be structured enough to aggregate and compare across the organisation, but lightweight enough that managers will actually complete it.

Some organisations go further, using pulse tools that ask managers to rate team readiness across two or three dimensions on a simple scale, providing a running heatmap of readiness by team and location. This kind of continuous sensing is far more valuable than a single pre-launch survey, because it catches deteriorating readiness before it becomes an adoption problem.
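A running heatmap of this kind can start out very simply. The sketch below assumes hypothetical fortnightly ratings on a 1 to 5 scale and classifies each team's direction of travel by comparing the first and latest rating; both the data and the `trend` rule are illustrative, not a feature of any particular pulse tool.

```python
# Hypothetical fortnightly manager ratings of team readiness (1 = low, 5 = high),
# listed oldest to newest.
pulse = {
    "Finance": [3, 4, 4],
    "Operations": [4, 3, 2],
    "Sales": [3, 3, 3],
}

def trend(ratings):
    """Classify the direction of travel between first and latest rating."""
    change = ratings[-1] - ratings[0]
    if change < 0:
        return "deteriorating"
    if change > 0:
        return "improving"
    return "stable"

def heatmap_row(team, ratings):
    """Render one text row of the heatmap, with the trend label appended."""
    cells = " ".join(str(r) for r in ratings)
    return f"{team:<12}{cells}  {trend(ratings)}"

for team, ratings in pulse.items():
    print(heatmap_row(team, ratings))

# Teams whose readiness is sliding and who warrant a follow-up conversation.
watchlist = [team for team, ratings in pulse.items() if trend(ratings) == "deteriorating"]
```

Comparing only the first and latest rating is a deliberate simplification. A team that dips and recovers would show as stable, so a fuller version might look at the most recent two or three check-ins instead.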

Step 5: run diagnostic workshops and focus groups

Workshops serve a function that no quantitative method can replicate: they allow you to probe, test assumptions, and hear the reasoning behind attitudes. A well-facilitated readiness workshop with a cross-section of impacted employees will surface the specific concerns, misconceptions, capability gaps, and workload pressures that are shaping readiness in that part of the organisation.

Structured focus groups, particularly with sceptics or resistors, are especially valuable. These conversations often reveal systemic issues that no survey would capture: a lack of trust in a specific leader, a process design flaw that makes the new way harder than the old way, or a team-specific constraint that the broader programme has failed to account for.

Readiness workshops also serve a secondary purpose: they are themselves a readiness-building intervention. When employees feel heard, when their concerns are taken seriously and addressed directly, their readiness to engage with the change typically improves.

Step 6: synthesise signals into a dynamic readiness picture

The final step is the one most organisations skip. Gathering data from five different sources is useful only if that data is brought together into a coherent, interpretable picture of readiness at the group level and across the initiative’s lifecycle.

A readiness synthesis should map across the dimensions you defined in Step 1, draw on all your data sources, and be updated at meaningful intervals (typically fortnightly during an active change period). It should identify which groups are ready, which are borderline, and which are at risk, along with a clear articulation of the specific readiness gaps driving each risk rating. That synthesis is the document your sponsor and project team should be reviewing at every steering committee meeting.
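One way to sketch that synthesis in Python is shown below. It assumes each data source has already been converted to a 0 to 100 score per dimension; the scores, the `READY` and `BORDERLINE` thresholds, and the rule of rating a group on its weakest dimension are illustrative assumptions rather than an established standard.

```python
from statistics import mean

# Hypothetical scores (0-100) per readiness dimension, one value per data source
# that speaks to that dimension (survey, system data, manager pulse, workshops).
group_scores = {
    "Finance": {"awareness": [80, 75], "capability": [70, 72], "capacity": [65, 70]},
    "Operations": {"awareness": [70, 60], "capability": [40, 35], "capacity": [30, 45]},
}

# Thresholds are illustrative assumptions, not published benchmarks.
READY, BORDERLINE = 70, 50

def synthesise(dimensions):
    """Average the sources per dimension, then rate the group on its weakest one."""
    averaged = {dim: mean(values) for dim, values in dimensions.items()}
    weakest = min(averaged.values())
    if weakest >= READY:
        rating = "ready"
    elif weakest >= BORDERLINE:
        rating = "borderline"
    else:
        rating = "at risk"
    # List every dimension that falls short of the "ready" bar.
    gaps = sorted(dim for dim, score in averaged.items() if score < READY)
    return rating, gaps

summary = {group: synthesise(dims) for group, dims in group_scores.items()}

for group, (rating, gaps) in summary.items():
    print(f"{group}: {rating} (gaps: {', '.join(gaps) or 'none'})")
```

Rating on the weakest dimension rather than the overall average is a design choice: it stops strong awareness scores from masking a capacity or capability gap that would still block adoption.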

The cumulative change problem: how your portfolio shapes readiness for any single initiative

Here is the readiness problem that change management programmes most consistently underestimate: the readiness of your people for this change is not determined solely by this change. It is shaped by everything else they are being asked to absorb simultaneously.

Research consistently shows that 73% of organisations are at or near their change saturation point: the threshold where concurrent initiatives overwhelm staff capacity and the ability to absorb any individual change, regardless of its quality, diminishes sharply. And the consequences are significant. Among employees experiencing high change fatigue, 54% are actively looking for new roles, compared to just 26% of those experiencing low fatigue: a 28 percentage point gap in retention risk that tracks closely with change overload.

The implication for change readiness assessment is significant. You cannot assess readiness for your ERP implementation without accounting for the fact that the same people are simultaneously navigating a restructure, a new performance management system, and an office relocation. Each of those initiatives consumes cognitive and emotional bandwidth. Each creates its own uncertainty and anxiety. And each reduces the available capacity for your initiative.

A change manager who assesses readiness in isolation from the broader change portfolio is working with an incomplete picture. They may diagnose low readiness for their initiative when the real issue is systemic change saturation: people who are fundamentally willing to adopt the new system but who simply do not have the bandwidth to engage with yet another change right now.

This demands a portfolio-level view of readiness. Organisations need to understand not just whether people are ready for a specific change, but what the cumulative change load looks like from the employee’s perspective, and how that load is distributed across different teams and roles.

Viewing readiness through the employee lens across the full change landscape

The most useful shift in perspective for any change readiness assessment is to move from the initiative view to the employee view. Instead of asking “are people ready for this change?”, ask “what does the full change picture look like for someone in this role right now, and does that picture leave them with the capacity and motivation to adopt this particular change?”

This means mapping the full set of changes affecting each impacted group, assessing the cumulative impact and demand on their time, and using that as the baseline against which you interpret readiness data for any individual initiative. A team that shows moderate readiness for your project but is simultaneously navigating three other significant changes is in a fundamentally different situation from a team with the same readiness score but minimal other change exposure.

The employee-centric view of readiness also reveals sequencing opportunities that an initiative-by-initiative assessment misses. If two high-impact changes are arriving at the same time for the same group of people, that is not a readiness problem but a scheduling problem, and the right intervention is timing adjustment rather than more training.
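The employee view can be approximated with a simple portfolio table. The sketch below uses a hypothetical portfolio (initiative names, dates, and 1 to 5 impact ratings are invented) to total the change load per group and to flag pairs of high-impact changes landing on the same group within an assumed 30-day window.

```python
from collections import defaultdict
from datetime import date
from itertools import combinations

# Hypothetical portfolio: (initiative, impacted group, go-live date, impact 1-5).
portfolio = [
    ("ERP implementation", "Operations", date(2025, 3, 3), 5),
    ("Restructure", "Operations", date(2025, 3, 17), 4),
    ("Performance system", "Finance", date(2025, 3, 10), 3),
    ("Office relocation", "Operations", date(2025, 6, 2), 2),
]

def load_by_group(rows):
    """Sum the impact ratings of every change landing on each group."""
    load = defaultdict(int)
    for _, group, _, impact in rows:
        load[group] += impact
    return dict(load)

def collisions(rows, window_days=30, min_impact=4):
    """Find pairs of high-impact changes hitting the same group close together."""
    found = []
    for a, b in combinations(rows, 2):
        same_group = a[1] == b[1]
        close = abs((a[2] - b[2]).days) <= window_days
        both_high = a[3] >= min_impact and b[3] >= min_impact
        if same_group and close and both_high:
            found.append((a[1], a[0], b[0]))
    return found

cumulative = load_by_group(portfolio)
clashes = collisions(portfolio)

print("Cumulative load:", cumulative)
print("Scheduling clashes:", clashes)
```

Summing impact ratings is a crude proxy for load, and the window and impact cut-off are assumptions to tune. Even so, a table like this makes the conversation about timing concrete before anyone commissions more training.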

Organisations that adopt this perspective tend to make materially better decisions about go-live timing, phasing, and the allocation of change management resources. Rather than deploying equal effort across all initiatives regardless of context, they concentrate support where the cumulative load is highest and where readiness gaps are most pronounced.

How AI is transforming readiness intelligence

The traditional barriers to multi-method readiness assessment have been time and synthesis capacity. Gathering data from five sources, segmenting it by group, and producing a coherent readiness picture every two weeks was genuinely burdensome for most change teams. AI is materially changing this equation.

Large language models and AI-assisted analytics tools can now process qualitative survey responses at scale, automatically coding open-text comments by theme and sentiment and identifying patterns that would take a human analyst days to surface. Free-text comments from 400 survey respondents can be synthesised in minutes, with the most common concerns ranked, the strongest language flagged, and the themes segmented by business unit.

Predictive analytics applied to system and behavioural data can generate early-warning signals before readiness problems become visible to the naked eye. Drops in training assessment scores, spikes in specific support ticket categories, or declining engagement with change communications can all be weighted and combined into a predictive readiness score that alerts the change manager before go-live.
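The weighting and combining step can be sketched as a simple composite score. This is a hand-weighted sum under stated assumptions, not a trained predictive model: the signal names, values, weights, and the `ALERT_BELOW` threshold are all hypothetical, and each signal is assumed to be normalised to a 0 to 1 range beforehand.

```python
# Hypothetical signals, each normalised so 0.0 = worst and 1.0 = best.
signals = {
    "training_assessment": 0.55,
    "ticket_trend": 0.40,
    "comms_engagement": 0.70,
}

# Illustrative weights (an assumption); they must sum to 1.
weights = {
    "training_assessment": 0.5,
    "ticket_trend": 0.3,
    "comms_engagement": 0.2,
}

ALERT_BELOW = 0.6  # Illustrative alert threshold.

def readiness_score(values, weighting):
    """Combine normalised signals into one weighted score between 0 and 1."""
    if abs(sum(weighting.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(values[name] * weight for name, weight in weighting.items())

score = readiness_score(signals, weights)
alert = score < ALERT_BELOW

print(f"Composite readiness score: {score:.3f}")
print("Raise alert:", alert)
```

In practice the weights would need calibrating against past initiatives, which is where a statistical or machine learning model could replace the hand-set values used here.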

Natural language processing applied to collaboration platforms, such as workplace chat or internal forums, can provide a passive sentiment signal that reflects how employees are actually talking about a change in their day-to-day interactions, a very different and often more candid data source than anything they would submit in a formal survey.

The 2024 State of AI Change Readiness research by Microsoft found that the biggest barrier to AI adoption within organisations is not technology but leadership, and specifically the gap between leader confidence and actual employee readiness. That finding applies equally to AI-assisted readiness tools: the technology is available, but change teams need to actively embed it into their practice rather than waiting for it to arrive pre-packaged.

AI does not replace the human judgment required to interpret readiness data and design appropriate interventions. But it does dramatically accelerate the data collection, synthesis, and signal-detection work that currently consumes the majority of a change manager’s analytical time.

Using digital tools to maintain a dynamic view of readiness

For organisations managing multiple initiatives simultaneously, maintaining a dynamic, portfolio-level view of readiness requires more than spreadsheets and periodic reports. Digital change management platforms like Change Compass are specifically designed to provide this visibility. They allow change managers to track readiness indicators across multiple initiatives, overlay cumulative change impact data, and view readiness from the employee-centric perspective rather than the initiative view. The platform’s ability to aggregate multiple data inputs and present a real-time change load picture across the organisation makes the kind of portfolio-level readiness analysis described in this article genuinely scalable, rather than something that only the best-resourced programmes can afford to do.

Conclusion

Change readiness assessment is not a compliance activity or a project milestone to tick off. It is the most important predictive mechanism a change manager has, and the quality of that assessment is what separates teams that catch adoption problems early from teams that spend the post-launch period firefighting.

The shift required is from single-method, point-in-time assessment to a multi-method, continuous, employee-centric approach that accounts for the full change landscape people are navigating. That means triangulating surveys with system data, manager reports, behavioural signals, and workshop diagnostics. It means maintaining a portfolio-level view of cumulative change load. And it means using AI to accelerate synthesis so that readiness intelligence is available when it is needed, not three weeks after the window for intervention has closed.

Start with your current initiative. Define the readiness dimensions that matter. Map all five data collection methods. Build the portfolio-level overlay. That is the step from readiness assessment as a ritual to readiness assessment as a genuine strategic tool.

Frequently asked questions

What is a change readiness assessment?

A change readiness assessment is a structured process for evaluating whether employees and the organisation as a whole are prepared to adopt a specific change. It examines dimensions including awareness, motivation, capability, capacity, and trust. Effective assessments use multiple data sources, not just surveys, to build an accurate and actionable picture.

How is change readiness different from change adoption?

Readiness is a precondition for adoption: it describes the state of preparedness before and during a change, while adoption describes the demonstrated behavioural change that results from successful implementation. You assess readiness to predict and influence adoption outcomes. Low readiness, if unaddressed, reliably produces low adoption.

How often should change readiness be assessed?

Research indicates that organisations using continuous measurement rather than single-point assessments achieve significantly higher adoption rates. For active change initiatives, readiness should be assessed at meaningful intervals throughout the implementation lifecycle, typically fortnightly, with rapid-signal methods (manager sensing, system data) running continuously in between.

What is change saturation and how does it affect readiness?

Change saturation occurs when the cumulative volume of concurrent changes exceeds an organisation’s capacity to absorb them. Research indicates 73% of organisations are already at or near this threshold. Saturation directly undermines readiness for any individual initiative by consuming the cognitive and emotional bandwidth employees need to engage with and adopt a specific change.

How can AI support change readiness assessment?

AI can significantly accelerate readiness data collection and synthesis. Applications include automated thematic analysis of open-text survey responses, predictive analytics applied to system usage and support ticket data, passive sentiment analysis from internal collaboration platforms, and real-time dashboards that aggregate multiple readiness signals into an interpretable summary for change managers and sponsors.

References

- Failure to accurately assess readiness accounts for around half of implementation failures — PMC (2024)

- Prosci Best Practices in Change Management (12th Edition) — Prosci

- The Prosci ADKAR Model — Prosci

- Managing Change Saturation: How to Prevent Initiative Fatigue and Portfolio Failure — The Change Compass

- Organisational Readiness for Change: A Multi-Dimensional Framework — ResearchGate (2024)

- A typology of organisational readiness for change based on a latent profile analysis — Frontiers in Psychology (2024)

- Navigating readiness for change: exploring the influence of authentic leadership, culture, learning and internal locus of control — Taylor & Francis (2024)

- State of AI Change Readiness eBook — Microsoft (2024)

- Deloitte 2023 Global Human Capital Trends

- Reconfiguring work: Change management in the age of gen AI — McKinsey (2024)