Most change functions have opinions. The best ones have data.

There is nothing wrong with experienced judgement in change management. A seasoned practitioner who has run fifty programmes develops pattern recognition that is genuinely valuable. But experienced judgement on its own has a ceiling. It cannot scale across a portfolio of twenty concurrent initiatives. It cannot identify that three separate programmes are about to converge on the same group of employees in the same six-week window. And it cannot make a credible case to a CFO for additional resourcing unless it can translate human risk into numbers.

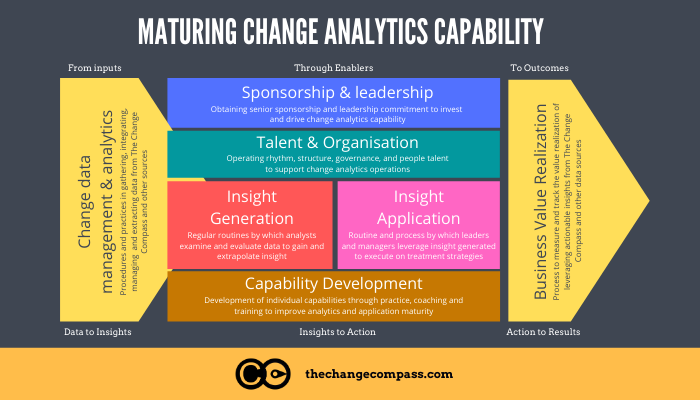

That is what change analytics capability provides. Not just dashboards and reports, but the organisational capacity to turn change data into decisions: decisions about which programmes need more support, which stakeholder groups are at risk of saturation, where adoption is lagging behind plan, and how to allocate scarce change management resources across a complex portfolio.

This article sets out what change analytics capability looks like in 2026, how to build it systematically, and what separates organisations that get genuine value from their change data from those that produce reports nobody acts on.

Why change analytics capability matters more now than ever

The analytics landscape has shifted profoundly in the past three years. Gartner’s 2025 data and analytics trend research identifies agentic AI and decision intelligence as the defining themes, with organisations moving beyond simply collecting data toward using it to make better decisions faster. Sixty-one percent of organisations are already evolving their data and analytics operating model because of AI’s impact.

This shift matters for change functions because it raises the bar on what “data-driven” means. Organisations that are building sophisticated decision intelligence capabilities in their commercial and operational functions are going to expect the same rigour from their change management function. A heat map with green-amber-red cells and no underlying methodology will not hold up in that environment.

The second driver is the compounding complexity of the change portfolio. Gartner research on change fatigue documented that employees experienced an average of ten simultaneous enterprise changes in 2022. Managing that volume without portfolio-level analytics is like running a hospital without patient records: theoretically possible, but not something you would design intentionally.

The third driver is accountability. Change management has historically struggled to demonstrate its own value in quantitative terms. Analytics capability provides the mechanism to do this: adoption rates by group, time to proficiency, correlation between change management investment and delivery outcomes. These are not vanity metrics; they are the evidence base for sustainable resourcing of the change function.

The four components of change analytics capability

Change analytics capability is not a single tool or a single role. It is an organisational capability with four interdependent components.

Component 1: Data infrastructure

You cannot analyse what you cannot collect. The data infrastructure component covers what change data is collected, in what format, at what frequency, and from what sources.

Common change data sources include:

- Change impact assessment data (groups affected, severity, change dimensions)

- Programme timeline and milestone data

- Stakeholder readiness survey results

- Adoption metrics (system usage, process completion, self-reported confidence)

- Sentiment data (pulse surveys, manager feedback, support ticket categories)

- HR data (headcount, location, reporting lines) for population segmentation

The critical design question is whether this data lives in connected systems or disconnected spreadsheets. A change function that manually compiles data from twelve different programme SharePoint sites every fortnight does not have analytics capability; it has a reporting overhead.

Component 2: Analytical methodology

Data infrastructure provides the raw material. Analytical methodology determines what you do with it. This component covers the frameworks and calculations used to turn raw data into meaningful signals.

Key analytical methodologies for change functions include:

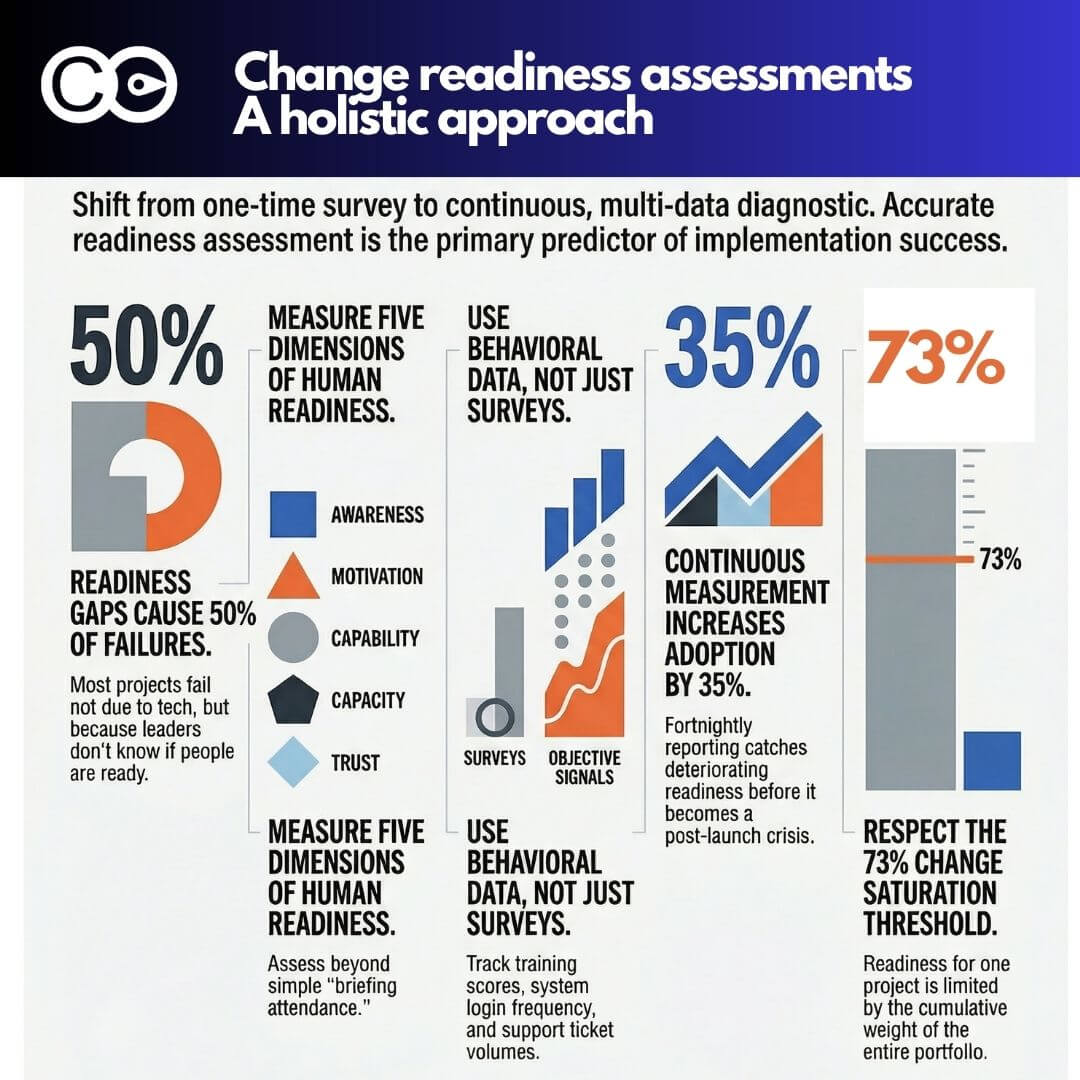

Change load analysis: Calculating the total volume of change being asked of each stakeholder group at any point in time, accounting for all concurrent initiatives. This is the foundation of change saturation management.
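As a rough illustration of the calculation, the sketch below sums impact severity per stakeholder group per week across concurrent initiatives and flags the weeks that exceed a saturation threshold. The `Impact` record, the 1 to 5 severity scale, and the threshold value are all assumptions made for the example, not a prescribed standard.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Impact:
    """One initiative's impact on one stakeholder group (hypothetical schema)."""
    initiative: str
    group: str
    start_week: int  # first week the group is affected, inclusive
    end_week: int    # last week the group is affected, inclusive
    severity: int    # assumed scale: 1 (low) to 5 (high)


def change_load(impacts):
    """Sum severity per (group, week) across all concurrent initiatives."""
    load = defaultdict(int)
    for imp in impacts:
        for week in range(imp.start_week, imp.end_week + 1):
            load[(imp.group, week)] += imp.severity
    return dict(load)


def saturated(load, threshold):
    """Return sorted (group, week) pairs whose load exceeds the threshold."""
    return sorted(key for key, total in load.items() if total > threshold)


# Illustrative data only: three initiatives converge on Finance in week 4.
impacts = [
    Impact("ERP rollout", "Finance", 1, 4, 4),
    Impact("New expense policy", "Finance", 3, 6, 2),
    Impact("Office move", "Finance", 4, 5, 3),
    Impact("ERP rollout", "Sales", 2, 3, 2),
]
load = change_load(impacts)
hot_spots = saturated(load, threshold=6)
print(hot_spots)
```

Simple addition of severity scores is the crudest possible weighting. A real methodology might weight by change dimension or by the share of the group affected, but the portfolio-wide aggregation step is the same.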

Adoption trajectory modelling: Tracking adoption rates against predicted curves to identify groups that are falling behind and require targeted intervention.
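One way to picture this: define the planned adoption curve, then compare each group's actual rate against it at a given week. The sketch below assumes a logistic S-curve as the plan. The curve shape, its parameters, and the ten-point tolerance are illustrative assumptions; a real plan curve would come from the programme's own targets or historical data.

```python
import math


def expected_adoption(week, midpoint=6.0, steepness=0.8, ceiling=0.95):
    """Planned adoption rate at a given week on an assumed logistic S-curve."""
    return ceiling / (1.0 + math.exp(-steepness * (week - midpoint)))


def lagging_groups(actual_by_group, week, tolerance=0.10):
    """Return {group: shortfall} for groups more than `tolerance` below plan."""
    planned = expected_adoption(week)
    return {
        group: round(planned - actual, 3)
        for group, actual in actual_by_group.items()
        if planned - actual > tolerance
    }


# Illustrative week-6 adoption rates (share of each group actively using the system).
week_6_actuals = {"Finance": 0.50, "Sales": 0.30, "Operations": 0.41}
behind_plan = lagging_groups(week_6_actuals, week=6)
print(behind_plan)
```

With these numbers only Sales is flagged: Operations is below plan but still within tolerance, which is the distinction that keeps targeted intervention targeted.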

Readiness gap analysis: Comparing assessed readiness levels against readiness thresholds required for successful go-live, enabling proactive resourcing decisions before problems materialise.
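In its simplest form this is a subtraction and a sort. The sketch below assumes readiness is scored 0 to 100 per group and that each group has its own go-live threshold; both the scale and the group names are hypothetical.

```python
def readiness_gaps(assessed, required):
    """Return (group, gap) pairs for groups below their go-live threshold.

    Scores are assumed to be on a 0-100 scale. The largest gap comes first,
    so the list doubles as a priority order for intervention.
    """
    gaps = [
        (group, required[group] - score)
        for group, score in assessed.items()
        if score < required[group]
    ]
    return sorted(gaps, key=lambda pair: pair[1], reverse=True)


# Illustrative survey results and thresholds.
assessed = {"Finance": 62, "Sales": 80, "Operations": 55}
required = {"Finance": 75, "Sales": 70, "Operations": 75}
gaps = readiness_gaps(assessed, required)
print(gaps)
```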

Impact correlation analysis: Examining the relationship between change impact scores and adoption outcomes to sharpen future impact assessment methodology.
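A minimal version of this analysis is a Pearson correlation between the impact score a group was given and the adoption rate it later achieved. The sketch below uses invented figures, and the 90-day adoption measure is an assumption. With samples this small the coefficient is indicative at best, and correlation alone does not show that the impact score drove the outcome.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length, at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Illustrative data: one entry per stakeholder group from past programmes.
impact_scores = [2, 3, 5, 4, 1]                    # assessed before go-live
adoption_at_90_days = [0.85, 0.70, 0.40, 0.60, 0.90]  # measured afterwards
r = pearson(impact_scores, adoption_at_90_days)
print(round(r, 2))
```

A strongly negative value here would suggest the impact scores are capturing something real about adoption difficulty. A value near zero would be a prompt to revisit how impact is being assessed.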

Component 3: Reporting and visualisation

Analytics that cannot be communicated are analytics that do not get acted on. Reporting and visualisation capability covers how change insights are packaged for different audiences.

Executive audiences need portfolio-level summaries: which programmes are on track for adoption, which groups are at risk, what the cumulative change load looks like across the organisation. They need this information in a format that takes two minutes to consume, not twenty.

Programme teams need operational detail: adoption metrics by group, readiness gap analysis by role, intervention recommendations for the next sprint cycle.

Business unit leaders need a group-specific view: what is landing on their people, when, and how that compares to their team’s current capacity.

The mistake many change functions make is producing one standardised report for all audiences. A skilled change analyst designs the visualisation to match the decision the audience needs to make.

Component 4: Decision-making integration

This is the component that separates analytics capability from analytics activity. Decision-making integration refers to whether change data is actually used to make portfolio and programme decisions, or whether it sits in a report that is acknowledged and filed.

The indicators of genuine decision-making integration include:

- Change analytics data is included as a standard agenda item in programme steering committee meetings

- Portfolio-level change load data informs programme sequencing and go-live scheduling decisions

- Adoption metrics trigger formal review and response when they fall below thresholds

- Change analytics are referenced in investment cases for change management resourcing

Without this integration, even excellent analytics capability produces limited value.

Building the capability: a five-stage maturity model

Change analytics capability does not appear fully formed. It develops in stages, and most organisations are at Stage 1 or 2.

Stage 1: Baseline awareness. Change data exists in individual programme documents. Impact assessments are completed per programme. No aggregation or portfolio view. Analytics capability = zero.

Stage 2: Programme-level reporting. Individual programmes track and report adoption metrics. Change managers produce reports for their specific programme stakeholders. No cross-programme view. Analytics capability = low.

Stage 3: Portfolio aggregation. Change data is collected in a consistent format across programmes and aggregated into a portfolio view. The change function can report on cumulative change load and cross-portfolio adoption status. Analytics capability = moderate.

Stage 4: Predictive analytics. Historical adoption data informs predictions for new programmes. Readiness gap analysis drives proactive resourcing decisions. Statistical models are applied to portfolio data to identify risk patterns. Analytics capability = high.

Stage 5: Decision intelligence. Analytics are embedded into portfolio governance. Scenario modelling tools allow leadership to test sequencing decisions before committing. AI-assisted analysis identifies emerging risks and patterns faster than human review alone. Analytics capability = advanced.

Most large organisations should be targeting Stage 3 or 4. Stage 5 is achievable but requires significant investment in both tooling and analytical capability within the change function.
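To make the Stage 5 idea of testing sequencing decisions concrete, here is a toy scenario model. It takes the initiatives already scheduled for one stakeholder group, tries delaying one movable initiative by a few weeks, and reports the delay that gives the lowest peak weekly load. The tuple format, the severity scale, and the "lowest peak wins" rule are all assumptions for illustration; real scenario tools weigh far more than load.

```python
from collections import Counter


def peak_load(windows):
    """Highest summed severity in any single week.

    `windows` is a list of (start_week, end_week, severity) tuples for one
    stakeholder group, with both weeks inclusive.
    """
    weekly = Counter()
    for start, end, severity in windows:
        for week in range(start, end + 1):
            weekly[week] += severity
    return max(weekly.values(), default=0)


def best_delay(fixed, movable, max_delay):
    """Try delaying `movable` by 0..max_delay weeks.

    Returns (delay, peak) for the lowest peak load, preferring the
    shortest delay when several options tie.
    """
    start, end, severity = movable
    options = [
        (peak_load(fixed + [(start + d, end + d, severity)]), d)
        for d in range(max_delay + 1)
    ]
    peak, delay = min(options)
    return delay, peak


# Illustrative schedule for one group: two fixed initiatives, one movable.
fixed = [(1, 4, 4), (3, 6, 2)]
movable = (4, 5, 3)
print(peak_load(fixed + [movable]))          # peak with the current schedule
print(best_delay(fixed, movable, max_delay=4))
```

In this example a one-week delay to the movable initiative brings the peak load down from 9 to 6, which is the kind of trade-off a steering committee can weigh before committing to a go-live date.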

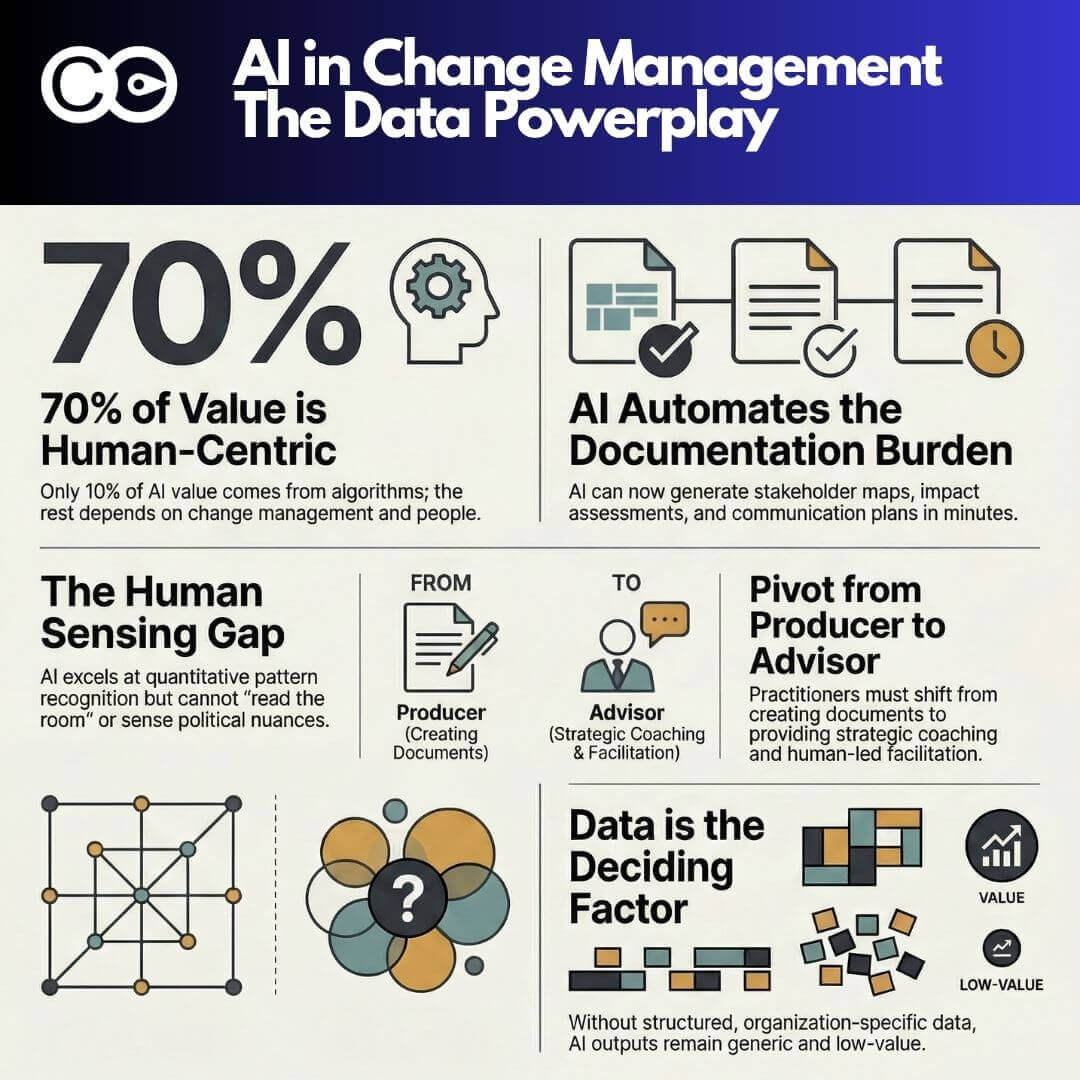

The AI dimension: what has changed since 2022

The past three years have fundamentally changed what is achievable in change analytics. Large language models can now summarise open-text survey responses at scale, identify sentiment patterns across stakeholder groups, and flag emerging risks in qualitative data that would previously have required hours of manual analysis.

Gartner’s 2024 analytics research identifies “decision intelligence” as the key capability organisations need to develop, moving beyond data collection and visualisation toward using data to actively inform and improve decisions. For change functions, this means building the capacity to ask predictive questions: given this programme’s impact profile, readiness trajectory, and the current portfolio load on this group, what is the probability of achieving adoption targets on schedule?

This is not science fiction. Purpose-built change management platforms are already incorporating these capabilities. The constraint is not the technology; it is whether the change function has built the data infrastructure and analytical methodology to feed the models meaningful inputs.

Practical tools for building change analytics capability

Building change analytics capability does not require a data science team. It requires three things: a commitment to consistent data collection, a methodology for analysis, and a platform that makes aggregation practical.

For organisations at Stage 1 or 2, the starting point is standardising the data that is collected across programmes. A consistent change impact assessment template, a standard adoption survey instrument, and a single place where programme data is stored are the foundations everything else builds on.
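For teams that keep this data in scripts or a small database rather than a spreadsheet, a standard template can be expressed as a validated record type. The sketch below is one possible shape: the field names, the list of change dimensions, and the 1 to 5 severity scale are assumptions, and the point is only that every programme submits the same fields with the same allowed values.

```python
from dataclasses import dataclass
from datetime import date

# Assumed taxonomy of change dimensions; substitute your own.
VALID_DIMENSIONS = {"process", "system", "role", "behaviour"}


@dataclass(frozen=True)
class ImpactAssessment:
    """One row of a standardised change impact assessment (hypothetical schema)."""
    programme: str
    group: str
    dimension: str
    severity: int  # assumed scale: 1 (low) to 5 (high)
    go_live: date

    def __post_init__(self):
        # Reject records that would not aggregate cleanly across programmes.
        if self.dimension not in VALID_DIMENSIONS:
            raise ValueError(f"unknown dimension: {self.dimension!r}")
        if not 1 <= self.severity <= 5:
            raise ValueError(f"severity must be 1-5, got {self.severity}")


record = ImpactAssessment("ERP rollout", "Finance", "system", 4, date(2026, 3, 2))
print(record)
```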

For organisations at Stage 3 and above, purpose-built platforms like Change Compass provide the infrastructure to aggregate cross-portfolio data, visualise cumulative change load by stakeholder group, and track adoption metrics in real time without manual compilation. The weekly demo shows what portfolio-level change analytics looks like in practice.

The analyst role: who owns change analytics?

As change analytics capability matures, the question of ownership becomes important. In most change functions, analytics work is done by change managers as a secondary responsibility. This works at Stage 2, but breaks down at Stage 3 and above.

Dedicated change analyst roles are increasingly common in large enterprise change functions. The change analyst focuses on data collection, methodology design, reporting, and the translation of data into decision-ready insights. This role sits at the intersection of change management and data analysis, and it is distinctly different from either.

Organisations that are serious about building analytics capability typically find that one dedicated change analyst serving a team of four to six change managers delivers returns that more than justify the investment, in the form of better-targeted change management effort and more credible reporting to leadership.

Where the return comes from

Change analytics capability generates return in three ways.

First, it improves the allocation of change management effort. When you can see which groups face the highest impact and the greatest adoption risk, you can direct scarce practitioner time to where it matters most rather than spreading it evenly across all programmes.

Second, it reduces adoption failures. Early warning signals (declining survey confidence, usage data below threshold, support ticket spikes) allow interventions before adoption problems become adoption failures. The cost of an intervention in week three is a fraction of the cost of a go-live failure in month six.

Third, it builds the case for the change function itself. Prosci research documents that organisations with excellent change management are significantly more likely to meet their objectives. Analytics capability is what turns that generalisation into a specific, defensible claim about your organisation’s change function.

Frequently asked questions

What is change analytics capability?

Change analytics capability is an organisation’s ability to systematically collect, analyse, and act on data about the change it is managing. It spans data infrastructure, analytical methodology, reporting design, and, critically, the integration of change data into actual portfolio and programme decisions. A change function with strong analytics capability can demonstrate adoption trends, flag saturation risks, and make the case for resourcing with evidence.

What data should a change analytics function collect?

The most valuable data sources are change impact assessment data (which groups, how severely, across which dimensions), stakeholder readiness survey results, adoption metrics (system usage, process adherence, self-reported confidence), and programme timeline data. HR population data is also essential for segmenting results by group, geography, or role type.

How does change analytics differ from project reporting?

Project reporting tracks whether activities have been completed: training delivered, communications sent, workshops run. Change analytics tracks whether change is actually happening: adoption rates, readiness levels, cumulative change load, sentiment trends. The first tells you what the change team has done. The second tells you whether it is working.

Do you need specialist tools for change analytics?

You do not need specialist tools to start. A consistent data collection approach and a shared repository are enough to get to Stage 2 or 3. But managing portfolio-level change data across ten or more concurrent programmes without purpose-built tooling quickly becomes unviable. Platforms designed for enterprise change management provide the aggregation, visualisation, and real-time tracking that make higher maturity levels practical.

How long does it take to build change analytics capability?

Moving from Stage 1 to Stage 3 (from no analytics to a functional portfolio view) typically takes six to twelve months of consistent effort, assuming leadership support and access to appropriate tools. The main constraint is not technology but discipline: building the habit of consistent data collection across all programmes, regardless of size or complexity.

References

- Gartner. (2025). Gartner Identifies Top Trends in Data and Analytics for 2025. https://www.gartner.com/en/newsroom/press-releases/2025-03-05-gartner-identifies-top-trends-in-data-and-analytics-for-2025

- Gartner. (2024). Gartner Identifies the Top Trends in Data and Analytics for 2024. https://www.gartner.com/en/newsroom/press-releases/2024-04-25-gartner-identifies-the-top-trends-in-data-and-analytics-for-2024

- Gartner. (2024). Gartner Says Finance Leaders Should Factor Change Fatigue into Project Planning. https://www.gartner.com/en/newsroom/press-releases/2024-02-27-gartner-says-finance-leaders-should-factor-change-fatigue-into-project-planning

- Prosci. (2024). The Correlation Between Change Management and Project Success. https://www.prosci.com/blog/the-correlation-between-change-management-and-project-success

- McKinsey & Company. (2024). Change is changing: How to meet the challenge of radical reinvention. https://www.mckinsey.com/capabilities/people-and-organizational-performance/our-insights/change-is-changing-how-to-meet-the-challenge-of-radical-reinvention