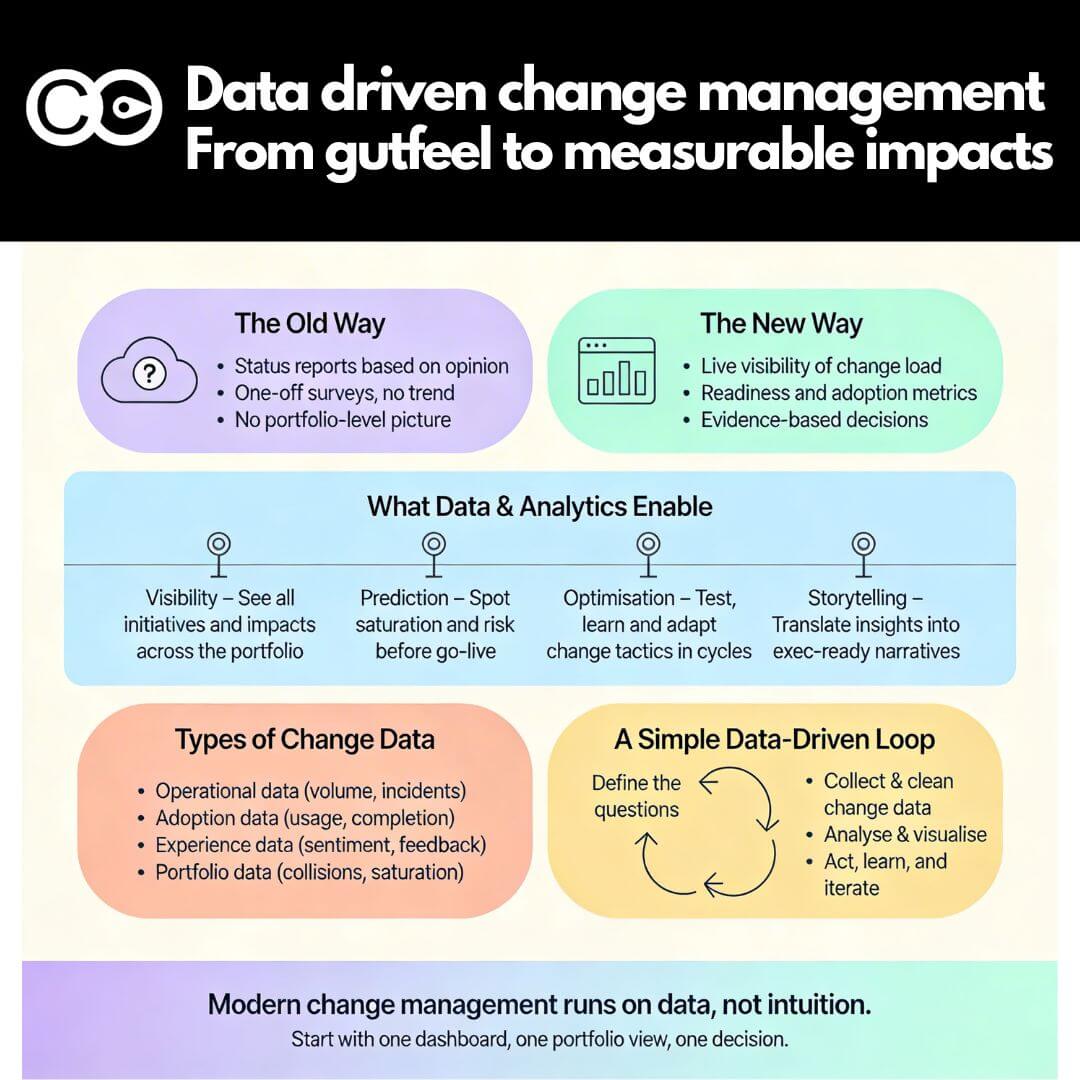

Ask most change managers what data they collect, and the answer tends to follow a familiar pattern: training completion rates, survey scores, maybe a post-go-live adoption dashboard. Ask them what they do with it, and the answer is often some version of “report upward.”

That is the core of change management’s analytics problem. The discipline has spent decades developing sophisticated frameworks for designing and delivering change. But its relationship with data has remained surprisingly unsophisticated: mostly retrospective, mostly lagging, and mostly in service of accountability rather than insight.

The organisations pulling ahead are doing something structurally different. They are not just measuring change outcomes: they are measuring change conditions. They are shifting from “did the change stick?” to “can we see the risk before it hits?” That shift, from retrospective reporting to diagnostic analytics, is what separates a modern change function from one that is perpetually reactive.

This article maps the four analytics capabilities that define a mature change function, explains why most organisations are still trapped at capability level one, and gives you a practical framework for building upward from where you are now.

Change management’s measurement problem runs deeper than most realise

The standard critique of change management measurement is that it is too qualitative. Change teams rely on stakeholder feedback, readiness assessments, and subjective manager observations, none of which produce the hard numbers that executives find credible.

That critique is valid, but it misses the more fundamental issue. The problem is not just that change data tends to be soft. It is also that even when change teams collect quantitative data, they tend to collect the wrong kind.

The metrics most change functions track almost universally fall into the same category:

- Training completion percentages

- Survey response rates

- Adoption percentages at go-live

- Post-implementation satisfaction scores

- Number of communications sent or stakeholder meetings held

Every one of these is a lagging indicator. They tell you what happened after the fact. A low adoption rate at go-live does not help you prevent the problem: it confirms it has already occurred. By the time the post-implementation survey reveals high resistance levels, the delivery window has passed.

According to Deloitte’s 2017 Global Human Capital Trends research, 71% of organisations view people analytics as a high priority, yet only 8% report having usable data and just 15% have deployed meaningful HR and talent scorecards for line managers. The gap between aspiration and analytical capability is striking, and it is particularly acute in change management, which has historically sat at the edges of both the HR and project delivery functions rather than squarely in either.

The opportunity is significant precisely because the bar is low. Organisations that build genuine analytical capability in change management are not competing against a high standard. They are differentiating themselves from a default state of measurement that is mostly backward-looking and mostly decorative.

The leading versus lagging indicator divide in change management

The distinction between leading and lagging indicators is well established in performance management but underutilised in change management. Understanding the difference, and actively choosing to build leading indicator capability, is the single most important analytical shift a change function can make.

What lagging indicators look like in practice

A lagging indicator measures an outcome after the fact. These are useful for evaluation and accountability: they tell you whether the change succeeded. Common lagging indicators in change management include:

- Final adoption rate at go-live or 30 days post-launch

- Benefits realised at 6 or 12 months post-implementation

- Post-implementation employee satisfaction or Net Promoter Score

- Productivity recovery time following a major system change

- Training completion rates captured at project close-out

Lagging data is easy to collect because it surfaces naturally through project close-out activities and post-implementation reviews. Most change functions have a reasonable supply of it. The problem is that it arrives too late to act on.

What leading indicators look like in practice

A leading indicator measures a condition that predicts an outcome. These tell you whether the change is likely to succeed while there is still time to intervene. In change management, the most valuable leading indicators include:

- Change load on a given team or business unit during a defined window

- Readiness scores tracked weekly in the four weeks before go-live

- Manager capability and engagement assessed at project initiation

- Degree of collision between concurrent initiatives landing on the same group

- Early adoption signals captured in the first two weeks post-launch

AIHR’s research on change management metrics identifies fifteen distinct categories of change management measurement. The majority are lagging indicators. The leading indicators that receive the least attention, and offer the most predictive value, relate to change saturation, manager readiness, and early adoption signals captured while there is still time to intervene.

The practical implication is direct: if your change analytics consist entirely of post-implementation reporting, you have accountability data but not insight data. You can explain what happened, but you cannot reliably predict or prevent what is about to happen. That is a significant capability gap in an environment where the average employee experienced ten planned enterprise changes in 2022, up from two in 2016, according to Gartner data cited by Harvard Business Review.

Four analytics capabilities that define a mature change function

A mature change analytics capability is not built all at once. It develops through four distinct levels, each building on the previous. Most organisations sit at level one or two. The distinction between levels three and four is where genuine competitive advantage in change delivery becomes visible.

Change load and capacity measurement

The foundational analytics capability for any change function is a consolidated view of change load across the portfolio: how many changes are landing on each business unit, each role group, and each leader in any given period.

This sounds straightforward. In practice, it is genuinely difficult. Projects are managed in silos. Change impact data lives in individual project files. Nobody aggregates it at the portfolio level until a change collision has already occurred and someone needs to explain why two major initiatives hit the same team in the same fortnight.

To build this capability, a change function needs three things:

- A shared taxonomy for categorising and quantifying change impacts

- A system for aggregating impact data across all concurrent initiatives

- A view that is updated regularly enough to be useful for scheduling decisions

When this capability is in place, change teams can provide something that most executive sponsors have never seen: a demand-versus-capacity view of change for each part of the business. That single view transforms the change function’s credibility in portfolio conversations.
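As a rough illustration of what a demand-versus-capacity view involves, the sketch below sums weighted impacts per business unit and period and flags any cell that exceeds a capacity figure. The impact weights, the capacity numbers, and the sample initiatives are all hypothetical; a real taxonomy and real capacity estimates would need to be agreed within the organisation.

```python
from collections import defaultdict

# Hypothetical weights for a shared impact taxonomy (an assumption, not a standard).
IMPACT_WEIGHTS = {"low": 1, "medium": 2, "high": 3}


def change_load(impacts):
    """Sum weighted impact per (business_unit, period) across all initiatives."""
    load = defaultdict(int)
    for _initiative, unit, period, level in impacts:
        load[(unit, period)] += IMPACT_WEIGHTS[level]
    return dict(load)


def over_capacity(load, capacity):
    """Return the (unit, period) cells whose load exceeds that unit's assumed capacity."""
    return sorted(cell for cell, total in load.items() if total > capacity[cell[0]])


# Illustrative data only: (initiative, business unit, period, impact level).
impacts = [
    ("ERP upgrade", "Finance", "2025-Q1", "high"),
    ("New expense policy", "Finance", "2025-Q1", "medium"),
    ("CRM rollout", "Sales", "2025-Q1", "high"),
    ("Office move", "Finance", "2025-Q2", "low"),
]
# Assumed absorption capacity per unit, in the same weighted units as the load.
capacity = {"Finance": 4, "Sales": 4}

load = change_load(impacts)
hotspots = over_capacity(load, capacity)
print(hotspots)
```

With this sample data, Finance in the first quarter is the only cell flagged, because a high and a medium impact land there together. The arithmetic is trivial; the hard part, as noted above, is getting every project to record impacts against the same taxonomy.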

Readiness and sentiment analytics

The second capability is the ability to measure, track, and predict readiness and sentiment at multiple points in a change lifecycle: not just at launch and not just at go-live, but continuously.

Pulse surveys, manager-level readiness assessments, and digital adoption signal data (where available) all contribute to this view. The critical shift is from one-off measurement to continuous tracking. Research cited by Freshworks indicates that organisations using continuous feedback achieve 30 to 40 per cent higher adoption rates than those measuring quarterly or annually.

The analytical value of continuous readiness data is not the individual snapshot: any single readiness score has limited meaning. The value is the trend. A team whose readiness score is low but improving steadily three weeks before go-live is in a very different position from a team whose score is low and static. A change team with access to trend data can make proactive resourcing decisions. A change team with only snapshot data can only react.
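To make the snapshot-versus-trend distinction concrete, here is a minimal sketch that fits a least-squares slope to weekly readiness scores and separates a low-but-improving team from a low-and-flat one. The 1 to 5 scale, the readiness threshold of 3.0, and the minimum slope of 0.1 points per week are assumptions chosen for illustration, not recommended values.

```python
def trend_slope(scores):
    """Least-squares slope of scores against their week index (points per week)."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two readings to compute a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(scores))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return cov / var


def classify(scores, threshold=3.0, min_slope=0.1):
    """Label a team from its latest score and its trend (thresholds are assumptions)."""
    if scores[-1] >= threshold:
        return "on track"
    if trend_slope(scores) >= min_slope:
        return "low but improving"
    return "low and not improving"


# Illustrative weekly readiness scores on a 1-5 scale, oldest first.
team_a = [2.0, 2.3, 2.6, 2.9]
team_b = [2.4, 2.4, 2.5, 2.4]

print(classify(team_a))
print(classify(team_b))
```

Both teams sit below the threshold in the latest week, so a single snapshot would treat them alike. The slope is what tells them apart: team A gains about 0.3 points a week, while team B is essentially flat.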

Benefits realisation tracking

The third capability is the one most closely tied to senior leader confidence in the change function: measuring whether project benefits are actually being realised, and attributing that outcome to the quality of change management.

Prosci’s research across thousands of practitioners demonstrates that organisations which clearly define success metrics before a change begins and measure performance against them throughout delivery increase their odds of meeting or exceeding their objectives by up to five times. That is not a marginal improvement in delivery quality. It is a structural shift in outcomes directly traceable to measurement rigour.

The challenge is attribution. Making a credible attribution case requires a four-step discipline that most change teams skip entirely:

- Agree on two or three measurable business outcomes with the project sponsor at initiation

- Capture a quantified baseline before the change begins

- Track progress against that baseline at defined milestones during delivery

- Measure the outcome at three and six months post-implementation and document the delta

Most organisations skip the second step, capturing a baseline, which makes the third and fourth meaningless. Without a baseline, you cannot demonstrate that the change was responsible for the improvement, or diagnose why it was not.
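The baseline discipline described above can be sketched as a small record that stores the baseline and target agreed at initiation and reports the delta at each milestone. The class name, field names, and the invoice-processing figures are hypothetical and exist only to show the arithmetic.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class OutcomeMetric:
    """One business outcome agreed with the sponsor at initiation (illustrative)."""
    name: str
    baseline: float             # quantified value captured before the change begins
    target: float               # value the change is expected to deliver
    readings: Dict[str, float]  # milestone label -> measured value

    def delta(self, milestone):
        """Change from the baseline at the given milestone."""
        return self.readings[milestone] - self.baseline

    def progress(self, milestone):
        """Share of the baseline-to-target gap closed at the given milestone."""
        gap = self.target - self.baseline
        if gap == 0:
            raise ValueError("target equals baseline, so progress is undefined")
        return self.delta(milestone) / gap


# Hypothetical example: average invoice processing time in days (lower is better).
metric = OutcomeMetric(
    name="Invoice processing time (days)",
    baseline=10.0,
    target=6.0,
    readings={"3 months": 8.0, "6 months": 6.5},
)

for milestone in ("3 months", "6 months"):
    print(milestone, metric.delta(milestone), metric.progress(milestone))
```

Without the `baseline` field, neither method can be computed, which is the point made above in code form. Note that a delta shows the outcome moved; it does not by itself prove the change caused the movement.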

Predictive risk modelling

The fourth and most advanced capability is using historical change portfolio data to model delivery risk before it materialises. Which combinations of change volume and complexity predict delivery failure? Which business units have historically absorbed change well, and which have consistently underperformed adoption targets? What leading indicators in the first four weeks of an initiative predict its six-month outcome?

This is the analytics territory that most change functions have not yet entered. It requires sufficient historical data, a consistent measurement framework applied across multiple projects over time, and the analytical infrastructure to interrogate patterns in that data. It is not achievable without building capabilities one through three first.

But the organisations that get there acquire something genuinely rare: the ability to advise executive teams on change portfolio risk before it shows up in delivery failures. That capability repositions the change function from a delivery support service into a strategic risk management function.
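A first step toward this kind of modelling need not be sophisticated. The sketch below groups past initiatives by the change load on the affected team at launch and computes how often each band missed its adoption target. It is a descriptive base rate rather than a validated predictive model, and the band edges and the historical records are invented for illustration.

```python
from collections import defaultdict


def load_band(load_score, band_edges=(3, 6)):
    """Bucket a load score into low / medium / high using assumed band edges."""
    low_edge, high_edge = band_edges
    if load_score < low_edge:
        return "low"
    if load_score < high_edge:
        return "medium"
    return "high"


def miss_rate_by_band(history, band_edges=(3, 6)):
    """Share of past initiatives in each load band that missed their adoption target."""
    totals = defaultdict(int)
    misses = defaultdict(int)
    for load_score, met_target in history:
        band = load_band(load_score, band_edges)
        totals[band] += 1
        if not met_target:
            misses[band] += 1
    return {band: misses[band] / totals[band] for band in totals}


# Invented history: (team change load at launch, adoption target met?).
history = [
    (2, True), (2, True), (1, True),
    (4, True), (5, False),
    (7, False), (8, False), (7, True),
]

rates = miss_rate_by_band(history)
for band in ("low", "medium", "high"):
    print(band, round(rates[band], 2))
```

With only a handful of records per band, rates like these are anecdotes rather than evidence. That is why the consistent measurement framework applied across many projects, described above, comes first.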

Building your change analytics capability: a practical starting point

Moving from a lagging-indicator approach to a genuinely diagnostic one does not require a large technology investment or a complete restructure of how change is managed. It requires three sequenced decisions about what to measure and what to do with the data.

Step 1: Map your change load

Before anything else, create a consolidated view of the change portfolio across all concurrent initiatives. Use whatever data already exists in project registers, programme plans, and change impact logs. The goal at this stage is simply visibility: a view that makes the total change demand on each part of the business legible to a decision-maker.

Practical actions to get started:

- List every active initiative affecting your top three most change-affected business units

- Estimate the change impact level (high, medium, low) for each and map it by quarter

- Identify any periods where high-impact changes overlap on the same team

Even a rough version of this view will surface problems you did not know existed.
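The three actions above can be done on paper or in a spreadsheet, but the overlap check is simple enough to sketch in a few lines. The register rows, team names, and quarter labels here are hypothetical.

```python
from collections import defaultdict


def high_impact_overlaps(register):
    """Find (team, quarter) cells where more than one high-impact initiative lands."""
    by_cell = defaultdict(list)
    for row in register:
        if row["impact"] == "high":
            by_cell[(row["team"], row["quarter"])].append(row["initiative"])
    return {cell: names for cell, names in by_cell.items() if len(names) > 1}


# Hypothetical initiative register with a rough high / medium / low estimate.
register = [
    {"initiative": "ERP upgrade", "team": "Finance", "quarter": "Q1", "impact": "high"},
    {"initiative": "Payroll migration", "team": "Finance", "quarter": "Q1", "impact": "high"},
    {"initiative": "New expense policy", "team": "Finance", "quarter": "Q1", "impact": "medium"},
    {"initiative": "CRM rollout", "team": "Sales", "quarter": "Q1", "impact": "high"},
    {"initiative": "Pricing tool", "team": "Sales", "quarter": "Q2", "impact": "high"},
]

overlaps = high_impact_overlaps(register)
for (team, quarter), names in overlaps.items():
    print(f"{team} {quarter}: {', '.join(names)}")
```

In this sample only Finance in Q1 is flagged, because the two Sales initiatives fall in different quarters.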

Step 2: Add readiness trending

Introduce pulse surveys or structured readiness check-ins at key milestone points across your projects, not just at launch and go-live. Standardise the questions enough that you can compare readiness across projects and build a portfolio-level view over time.

What to standardise:

- Three to five consistent questions about manager confidence, employee awareness, and capacity to absorb the change

- A consistent scoring scale so trends are comparable across initiatives

- A schedule: measure at project kick-off, midpoint, four weeks pre-launch, and go-live

Step 3: Define outcome metrics at project initiation

Before the next major initiative begins, agree with the project sponsor on two or three specific, measurable business outcomes that the change will deliver. Capture a baseline now. Schedule post-implementation measurement at three and six months.

Each of these steps can be executed with basic tools. They require discipline and consistency more than technology. But each one generates data that did not previously exist, and that data compounds into the historical record that eventually enables predictive modelling.

Common traps when introducing data to change management

Measuring activity rather than impact

Counting communications sent, training sessions delivered, and stakeholder meetings held tells you whether the change team was busy. It does not tell you whether any of it worked. Activity metrics have their place in project management, but they should never be the primary lens through which change effectiveness is assessed. If your change dashboard is full of input metrics and empty of outcome metrics, you are reporting effort, not performance.

Using data for accountability rather than insight

When data is collected primarily to report upward to sponsors and steering committees, it tends to get cleaned and smoothed before it reaches the audience. Genuinely useful change data surfaces inconvenient truths: a team is not ready, a manager is not engaged, a timeline is unrealistic given current change load. Creating the conditions in which data is used to diagnose and improve rather than to demonstrate compliance is a cultural challenge as much as a technical one.

Waiting for perfect data before acting

Many change teams delay building measurement practices because they feel they lack the right tools, the right mandate, or sufficient data quality. The reality is that imperfect, consistent data collected over time is far more valuable than perfect data collected once. A readiness score captured with a five-question pulse survey every fortnight, applied consistently across every initiative, is worth more than a comprehensive assessment done once at project launch and never revisited.

Treating analytics as a separate workstream

Change analytics is most powerful when it is integrated into the rhythm of change delivery: regular portfolio reviews, milestone check-ins, and initiative retrospectives. When measurement is treated as a separate reporting obligation, it tends to get deprioritised when delivery pressure mounts, which is exactly when the insight would be most useful.

How digital tools make change analytics actionable

The four capabilities described above are possible to build with spreadsheets and manual aggregation, but they are difficult to sustain at scale. The coordination overhead of pulling change load data from a dozen project plans, standardising it, and producing a portfolio view that is current enough to be useful becomes prohibitive when the portfolio grows beyond six or eight concurrent initiatives.

Purpose-built platforms such as Change Compass are designed specifically to automate the aggregation and visualisation work that makes portfolio-level change analytics possible. When impact data, readiness scores, and timeline information are captured in a shared system, the portfolio view is always current. Trend data is available without manual compilation. Risk signals surface in time to act on them rather than explain them.

The technology does not substitute for the analytical thinking. Understanding what the data means and what to do about it still requires experienced change practitioners. But it removes the data management burden that most change teams currently carry manually, freeing capacity for the work that actually requires human judgement.

The diagnostic shift is the real opportunity

The most important thing a change function can do with data is not to produce better reports. It is to ask better questions. Not “did our training achieve high completion rates?” but “which teams show early adoption signals that predict full utilisation?” Not “how did our last change land?” but “which teams are carrying a change load that puts the next initiative at risk before it even starts?”

That diagnostic shift, from measuring what happened to anticipating what is about to happen, is what data and analytics in change management actually make possible. The tools and techniques are available. The data is largely there, waiting to be aggregated. The missing ingredient, in most organisations, is the decision to treat change as something that can be measured, modelled, and managed like any other business risk.

The organisations that make that decision are not just running better change programmes. They are building an institutional capability that compounds over time, each project adding to a data asset that makes the next one more predictable, more manageable, and more likely to deliver the benefits it promised.

Frequently asked questions

What is change management analytics?

Change management analytics is the practice of collecting, aggregating, and interpreting data about change activity, employee readiness, change portfolio load, and project outcomes to inform decision-making during and across organisational change initiatives. It encompasses both lagging indicators (outcomes after the fact) and leading indicators (conditions that predict outcomes).

What is the difference between leading and lagging indicators in change management?

Lagging indicators measure outcomes after a change has been delivered, such as final adoption rates, benefits realised, and post-implementation satisfaction scores. Leading indicators measure conditions that predict those outcomes, such as current change load on a team, readiness scores trending upward or downward before go-live, and manager engagement levels in the early stages of delivery. Leading indicators allow change teams to intervene proactively; lagging indicators only enable retrospective evaluation.

How do organisations measure change saturation?

Change saturation is typically measured by aggregating the change impacts from all concurrent initiatives and mapping them to the business units and role groups they affect. The resulting view shows cumulative change demand per team during a given period, which can be compared against historical absorption capacity and change readiness data. Most organisations do not measure saturation systematically, which is why change collisions are frequently discovered after they have already affected delivery.

What metrics should a change management function track?

A mature change function tracks metrics across four categories: change load and capacity (how much change is hitting each part of the business), readiness and sentiment (are affected teams prepared to adopt the change), delivery execution (is the change being managed well), and benefits realisation (are the business outcomes being achieved). The balance should shift toward more leading indicators and fewer lagging ones as analytical maturity grows.

Can small change teams realistically implement analytics practices?

Yes. The most valuable analytics practices, particularly change load mapping and continuous readiness tracking, can be implemented with minimal tooling. What they require is consistency: applying the same measurement framework across every initiative, capturing a baseline before each change begins, and aggregating individual project data into a portfolio view. Small teams often start with a shared spreadsheet and evolve toward purpose-built tooling as the portfolio grows and the value of consolidated data becomes clear to sponsors.

References

- Deloitte. “People Analytics in HR — Global Human Capital Trends.” https://www.deloitte.com/us/en/insights/topics/talent/human-capital-trends/2017/people-analytics-in-hr.html

- Prosci. “Metrics for Measuring Change Management.” https://www.prosci.com/blog/metrics-for-measuring-change-management

- AIHR. “15 Important Change Management Metrics To Track.” https://www.aihr.com/blog/change-management-metrics/

- Harvard Business Review. “Employees Are Losing Patience with Change Initiatives.” May 2023. https://hbr.org/2023/05/employees-are-losing-patience-with-change-initiatives

- Freshworks. “12 Change Management Metrics and KPIs to Track in 2025.” https://www.freshworks.com/change-management/metrics/

- The Change Compass. “How to Measure Change Management Success: 5 Key Metrics That Matter.” https://thechangecompass.com/how-to-measure-change-management-success-5-key-metrics-that-matter/