The principle often attributed to Peter Drucker, that you can only manage what you measure, has been cited in management contexts for decades. Applied to change management, it exposes one of the field’s most persistent problems. Most organisations are measuring the wrong things. They are measuring activity: communications sent, training sessions delivered, stakeholder engagement meetings held. These metrics demonstrate that change management work is being done. They do not demonstrate that change is happening.

The consequence of measuring the wrong things is that you end up managing the wrong things. Change functions that track activity metrics optimise for activity. They ensure training completion rates are high. They send communications on schedule. They hold engagement sessions. And they are routinely surprised when adoption at go-live is lower than expected, because the thing they were actually trying to achieve, a genuine shift in how people work, was never the thing they were measuring.

Getting change management measurement right requires a deliberate shift: from activity metrics to adoption metrics, from go-live snapshots to trend data over time, and from programme-level reporting to portfolio-level visibility. Each shift is technically straightforward. Collectively, they transform the information a change function has available and the decisions it enables.

Why activity metrics dominate and why they mislead

Activity metrics are appealing for two reasons. They are easy to collect, and they show progress in real time. The number of stakeholders briefed grows with each workshop held. Training completion percentage climbs as learning modules are finished. Communication send dates tick off against the plan.

The problem is that these metrics tell you about inputs, not outcomes. A training completion rate of 95% tells you that 95% of employees sat through a training module. It tells you nothing about whether they are working differently. A stakeholder briefing tells you that a conversation happened. It does not tell you whether the stakeholder is now an active advocate for the change, actively resistant to it, or somewhere in between.

AIHR’s guide to change management metrics draws a clear distinction between process metrics, which track activities completed, and outcome metrics, which track whether the change is actually taking hold. Process metrics are necessary but not sufficient. Without outcome metrics, a change function is flying blind on the question that matters: is the change happening?

The deeper problem with activity-focused measurement is what it rewards. A change team assessed primarily on whether communications are on schedule and training is completed will optimise for those things. It will not necessarily prioritise the harder, less quantifiable work of identifying and removing the structural barriers to adoption, coaching managers through their own uncertainty, or advocating for performance framework changes that align incentives with the new ways of working. Those interventions require time and attention. Without metrics that value them, they get crowded out.

The three levels of change measurement

A robust change management measurement framework operates at three levels, each of which answers a different question.

Level 1: Adoption measurement

The foundational level tracks whether people are actually changing how they work. Adoption metrics vary by change type but typically include:

- Active usage rates for new systems and tools, measured at the role-group level, not just organisation-wide

- Behavioural indicators specific to the change: are decisions being made using the new process? Are outputs conforming to the new standard?

- Error rates and workaround patterns, which indicate where the new way of working is breaking down in practice

- Self-reported proficiency, gathered through structured check-ins rather than end-of-training surveys

Adoption measurement requires a baseline. You need to know what behaviour looked like before the change to assess whether it has shifted. This sounds obvious but is often skipped, leaving change functions unable to demonstrate movement even when significant movement has occurred.

Level 2: Readiness and leading indicators

The second level focuses on the conditions for adoption rather than adoption itself. These are leading indicators that predict future adoption outcomes:

- Manager confidence and capability in supporting the change at team level

- Stakeholder sentiment and the degree to which key influencers are actively supporting versus passively or actively resisting

- Awareness and understanding scores, which indicate whether employees know what is changing, why, and what is expected of them

- Access to support, whether employees know where to go when they encounter difficulty with the new way of working

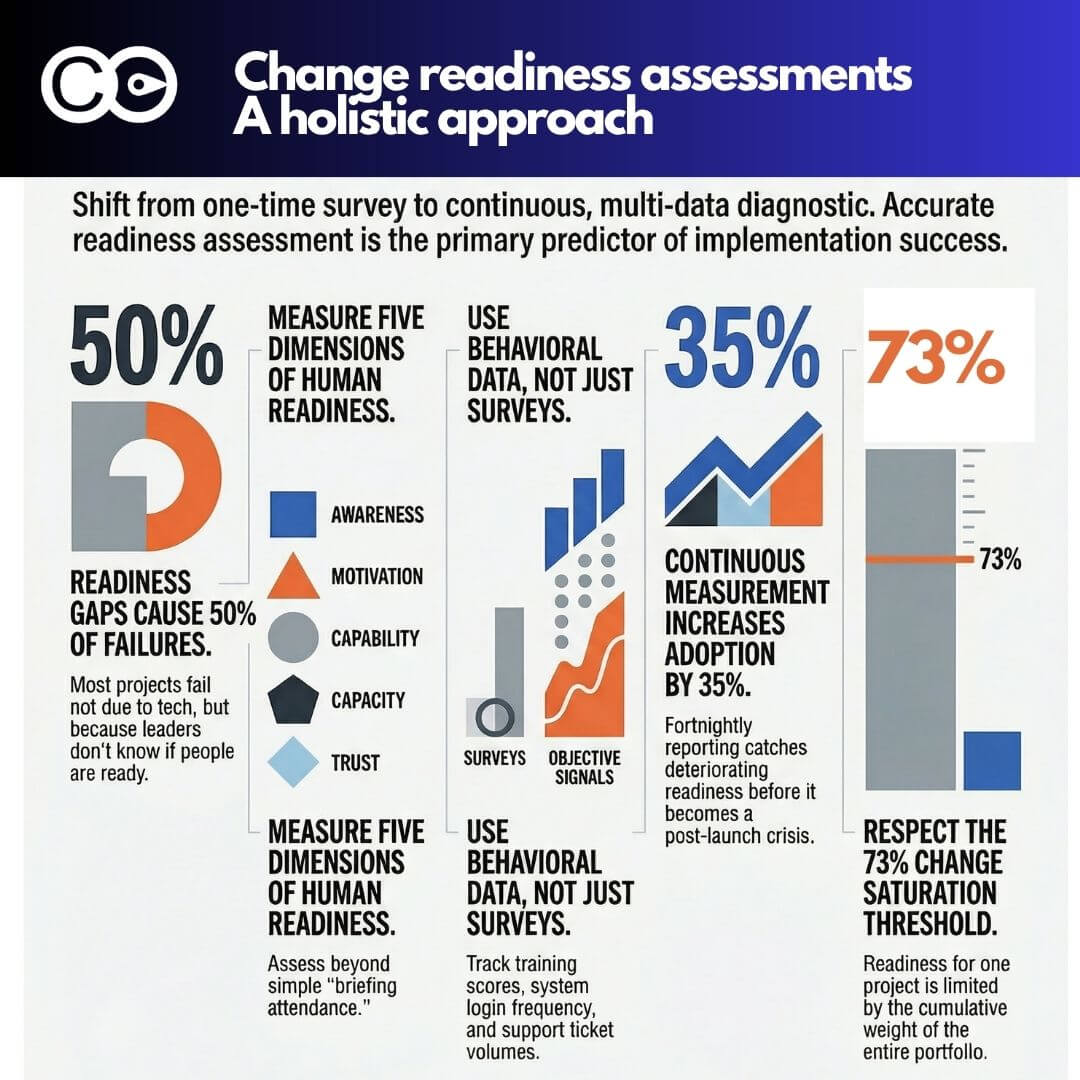

Leading indicators are valuable because they can identify problems while there is still time to intervene. An adoption measurement taken at go-live tells you what happened. Leading indicators taken four weeks before go-live give you the opportunity to change what happens.

Level 3: Business outcome linkage

The third level connects change management work to business results. This is the most difficult level to measure and the most persuasive for executive audiences.

Business outcome metrics vary by change programme. For a technology implementation, they might include productivity measures or error rates in the affected process. For an organisational restructure, they might include time-to-effectiveness for teams in new configurations. For a culture change programme, they might include customer satisfaction or employee engagement trends.

The practical challenge at this level is attribution. Business outcomes are affected by many things beyond change management quality. The most effective approach is not to claim sole attribution, but to demonstrate contribution through correlation and comparison: how do adoption levels compare between groups that received intensive change support and those that received standard support? How does benefits realisation timing track against adoption curve progress?
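The comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the team-level adoption rates, the `adoption_by_support` structure, and the `contribution_gap` helper are all hypothetical. The resulting gap indicates contribution, not causation, because the two groups may differ in ways other than the support they received.

```python
from statistics import mean

# Hypothetical adoption rates (share of active users) per team,
# split by the level of change support each team received.
adoption_by_support = {
    "intensive": [0.82, 0.76, 0.88, 0.79],
    "standard": [0.61, 0.70, 0.58, 0.66],
}

def contribution_gap(groups: dict[str, list[float]]) -> float:
    """Mean adoption of intensively supported teams minus that of standard-support teams."""
    return mean(groups["intensive"]) - mean(groups["standard"])

gap = contribution_gap(adoption_by_support)
print(f"Mean adoption gap: {gap:.1%}")
```

A gap of this kind is most persuasive when the same comparison is repeated across several programmes and shown alongside benefits realisation timing.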

Common mistakes in change measurement frameworks

Several patterns recur in how change measurement frameworks go wrong, beyond the activity-versus-outcome problem.

Measuring at go-live rather than over time. Change adoption is not a moment; it is a curve. Most organisations take a readiness snapshot at go-live and a benefits measurement six months later. The period in between, when adoption is building, stalling, or reversing, is often invisible. Organisations that measure adoption at monthly intervals across the first six months after go-live consistently identify problems that go-live-only measurement misses.

Using the same metrics for all change types. A technology adoption and a cultural change require different measurement approaches. A process change and an organisational restructure have different adoption timelines. Generic measurement frameworks applied uniformly across a change portfolio produce data that is too coarse to act on.

Reporting averages across heterogeneous groups. An organisation-wide adoption rate of 68% might mask a rate of 90% in one business unit and 35% in another. The action required in those two units is entirely different. Effective change measurement reports adoption by employee group, role level, and geography rather than flattening everything to a single number.
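A short worked example shows how this masking happens. The headcounts and adopter counts below are hypothetical, chosen so that the figures match the 68%, 90% and 35% mentioned above.

```python
# Hypothetical headcounts and adopter counts for two business units.
units = {
    "Unit A": {"headcount": 600, "adopters": 540},
    "Unit B": {"headcount": 400, "adopters": 140},
}

def adoption_rate(adopters: int, headcount: int) -> float:
    """Share of a group that is working in the new way."""
    return adopters / headcount

# Organisation-wide rate: total adopters over total headcount.
overall = adoption_rate(
    sum(u["adopters"] for u in units.values()),
    sum(u["headcount"] for u in units.values()),
)

# The same data, reported per unit instead of flattened.
by_unit = {
    name: adoption_rate(u["adopters"], u["headcount"])
    for name, u in units.items()
}

print(f"Overall: {overall:.0%}")       # Overall: 68%
for name, rate in by_unit.items():
    print(f"{name}: {rate:.0%}")       # Unit A: 90%, then Unit B: 35%
```

The organisation-wide figure is a headcount-weighted average, so a large, well-performing unit can hide a smaller unit where adoption has barely started.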

Treating employee survey responses as objective data. Pulse surveys and change readiness assessments reflect what employees are willing to say, which is shaped by psychological safety, survey fatigue, and the perceived consequences of honest feedback. They are useful inputs but should be triangulated with behavioural data where possible.

Portfolio-level measurement: the view that matters most

Individual programme measurement, even done well, produces a fragmented picture. A change function that can tell you adoption rates for each of its ten active programmes cannot necessarily tell you the cumulative change burden on specific employee groups, whether the portfolio as a whole is delivering adoption at the rate the organisation’s transformation strategy requires, or where the systemic patterns in adoption performance suggest structural capability issues.

Portfolio-level measurement addresses these gaps. It requires:

- A consistent measurement taxonomy across programmes, so that adoption data from different initiatives can be aggregated meaningfully

- A portfolio adoption dashboard that shows trend lines by employee group across all active programmes, not just point-in-time scores for individual initiatives

- Comparative analysis across programmes to identify patterns: are certain types of change consistently underperforming? Are certain employee groups consistently showing lower adoption rates regardless of which programme is being measured?

The comparison question is particularly valuable. If a specific business unit shows below-target adoption across five consecutive change programmes, that is a portfolio signal, not a programme signal. The root cause is more likely to be leadership capability, change saturation, or structural friction in that unit than a problem with any specific initiative. Programme-level measurement cannot surface this insight. Portfolio-level measurement can.
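As a rough sketch of how such a portfolio signal might be detected, the snippet below flags business units that fell below target in each of their five most recent programmes. The target value, the record layout, and the `portfolio_signals` function are assumptions made for illustration; a real portfolio would need a consistent measurement taxonomy before this kind of aggregation is meaningful.

```python
from collections import defaultdict

TARGET = 0.75        # hypothetical adoption target
MIN_PROGRAMMES = 5   # mirrors the five-programme example in the text

# Hypothetical records in go-live order: (programme, business unit, adoption rate).
records = [
    ("P1", "Finance", 0.81), ("P1", "Operations", 0.62),
    ("P2", "Finance", 0.70), ("P2", "Operations", 0.58),
    ("P3", "Finance", 0.78), ("P3", "Operations", 0.66),
    ("P4", "Finance", 0.84), ("P4", "Operations", 0.71),
    ("P5", "Finance", 0.77), ("P5", "Operations", 0.60),
]

def portfolio_signals(records, target, min_programmes):
    """Return units whose last `min_programmes` programmes were all below target."""
    history = defaultdict(list)
    for _programme, unit, rate in records:
        history[unit].append(rate)
    return sorted(
        unit
        for unit, rates in history.items()
        if len(rates) >= min_programmes
        and all(rate < target for rate in rates[-min_programmes:])
    )

print(portfolio_signals(records, TARGET, MIN_PROGRAMMES))  # ['Operations']
```

In this example Finance misses the target once, which is a programme-level issue, while Operations misses it every time, which points to something structural in that unit.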

Tools such as The Change Compass are purpose-built for this portfolio measurement challenge: aggregating adoption data across programmes, tracking cumulative impact by employee group, and generating the portfolio-level view that enables the conversations with business leadership that individual programme reporting cannot support.

Making the case for better measurement

For change leaders who need to build the internal case for investing in measurement capability, the most compelling argument is opportunity cost. What decisions is the organisation currently unable to make, or making badly, because of gaps in change measurement data?

Specific examples that resonate with executive audiences include: the inability to predict which programmes are at risk of underperforming on adoption before go-live; the absence of data to support a sequencing decision when two major programmes are planned to land simultaneously on the same employee group; and the difficulty of demonstrating the contribution of change management investment to business outcomes when outcomes are tracked but change quality is not.

These are not abstract arguments. They describe real decisions that organisations make with inadequate information every quarter. A measurement framework that closes these gaps has demonstrable decision value, not just methodological value.

A practical starting point

Building a full three-level measurement framework from scratch is a multi-year effort. For most change functions, the most valuable immediate step is to add a single adoption metric, one that is not currently being tracked, to at least one current programme.

The most useful first adoption metric is typically active usage rate by role group, tracked monthly for the first six months post go-live, compared against a baseline taken in the last month before go-live. This single data series will generate more actionable insight about whether the change is landing than any number of communications-sent or training-completed metrics.
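The sketch below illustrates what that single data series could look like in practice. All role groups, headcounts and usage counts are hypothetical, as are the helper functions; the point is only to show a monthly rate per role group, its uplift over the pre-go-live baseline, and a simple check for months in which adoption fell.

```python
# All figures are hypothetical, for illustration only.
headcount = {"Team leaders": 40, "Analysts": 120}
baseline_active = {"Team leaders": 4, "Analysts": 6}  # last month before go-live
monthly_active = {                                     # months 1 to 6 after go-live
    "Team leaders": [18, 25, 30, 33, 34, 35],
    "Analysts": [40, 55, 52, 48, 45, 42],
}

def usage_rates(role: str) -> list[float]:
    """Monthly active usage rate for one role group."""
    return [active / headcount[role] for active in monthly_active[role]]

def uplift_over_baseline(role: str) -> float:
    """Latest monthly rate minus the pre-go-live baseline rate."""
    baseline_rate = baseline_active[role] / headcount[role]
    return usage_rates(role)[-1] - baseline_rate

def declining_months(role: str) -> list[int]:
    """Months (1-indexed) in which the usage rate fell versus the previous month."""
    rates = usage_rates(role)
    return [i + 1 for i in range(1, len(rates)) if rates[i] < rates[i - 1]]

for role in headcount:
    print(
        role,
        f"uplift={uplift_over_baseline(role):+.0%}",
        "declining months:", declining_months(role),
    )
```

With these numbers, team leaders show steady growth, while analysts peak in month two and decline every month afterwards, a reversal that a go-live snapshot alone would not reveal.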

From there, the measurement framework can be built progressively: adding leading indicators, extending to business outcome linkage for strategic programmes, and eventually aggregating to portfolio level as the methodology matures. The principle at each stage is the same. Measure what you are trying to achieve, not what is easiest to count.

Frequently asked questions

What should change management metrics actually measure?

Effective change metrics measure whether behaviour has changed, not whether change activities were completed. The primary outcomes to measure are adoption rate by role group, which tracks whether people are working in the new way; readiness and capability scores, which are leading indicators of adoption; and business outcome contribution, which connects change quality to the results the change programme was designed to achieve.

What is the difference between change management activity metrics and adoption metrics?

Activity metrics track inputs: communications sent, training completed, stakeholder briefings held. Adoption metrics track outcomes: whether employees in specific roles are consistently working in the new way. Activity metrics are easy to collect and show progress in real time, which is why they dominate most change measurement frameworks. The problem is that high activity metrics and low adoption outcomes frequently coexist, because completing training and changing behaviour are different things.

How often should you measure change adoption?

More frequently than most organisations do. A readiness baseline before go-live, monthly adoption tracking for the first six months post go-live, and a benefits realisation review at months six and twelve together give a meaningful picture of how adoption is progressing. Organisations that measure adoption only at go-live miss the adoption curve entirely and have no early warning of problems that could be addressed with timely intervention.

How do you measure the ROI of change management?

The most practical approach for most organisations is to track adoption levels and benefits realisation timing across programmes where change management was applied, and compare them to a realistic alternative scenario or historical baseline. Prosci’s research consistently finds that programmes with effective change management achieve significantly better adoption and benefits realisation than those without. Building an internal evidence base over multiple programmes creates a credible case for change management ROI that external benchmarks alone cannot provide.

What is portfolio-level change measurement?

Portfolio-level change measurement aggregates adoption data, impact data, and readiness indicators across all active change programmes to give a view of how change is landing across the organisation as a whole. It enables comparisons across programmes, identification of systemic adoption patterns, and cumulative load analysis by employee group. It is the level of measurement that enables the strategic conversations with business leadership that programme-level reporting cannot support.

References

- AIHR. 15 Important Change Management Metrics To Track in 2026. https://www.aihr.com/blog/change-management-metrics/

- Prosci. Metrics for Measuring Change Management. https://www.prosci.com/blog/metrics-for-measuring-change-management

- Prosci. The Correlation Between Change Management and Project Success. https://www.prosci.com/blog/the-correlation-between-change-management-and-project-success

- Freshworks. 12 Change Management Metrics and KPIs to Track in 2025. https://www.freshworks.com/change-management/metrics/

- OCM Solution. 2025-2026 Organizational Change Management Trends Report. https://www.ocmsolution.com/organizational-change-management-ocm-trends-report/